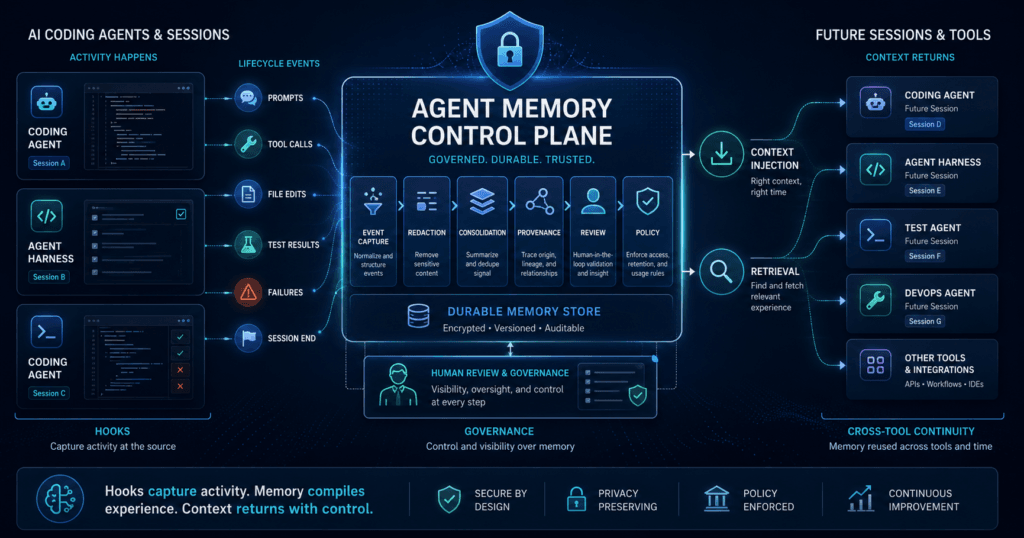

The agent memory control plane is becoming one of the most important missing layers in AI coding agent architecture: a user-owned system that observes agent work, turns useful history into durable memory, and injects that memory back into future sessions without depending on any single model or tool.

Every AI coding agent wakes up slightly amnesic.

It may read your repository. It may follow a CLAUDE.md, an AGENTS.md, a rules file, or a project instruction document. It may scan recent diffs and inspect the current task. But it usually does not carry a governed, portable, high-quality memory of how the project evolved, what the team decided last week, which approaches already failed, which files should not be touched, or why a certain architectural choice exists.

That limitation is easy to miss during demos. A coding agent can look impressive inside one task. It can explore a codebase, propose changes, run commands, and produce useful work. The weakness appears over time. The same context gets re-explained. The same mistakes recur. The same project conventions are rediscovered instead of remembered. The same business rules are scattered across prompts, tickets, files, and developer heads.

The obvious answer is to give the agent more context. That helps, but it is not the same as memory.

The better answer is to build an agent memory control plane: a layer outside the model and outside any single agent harness that captures important events, consolidates them into durable memory, governs what can be reused, and injects relevant context into future work.

That framing matters because the next fight in AI coding tools may not be only about model quality. It may be about who owns the memory.

Prompt Files Are Useful, But They Are Not Memory

Prompt files are valuable. A well-maintained project instruction file can tell an agent how the repository is organized, which commands to run, what coding standards to follow, and what architectural boundaries matter. Many teams should improve those files before they think about more advanced systems.

But prompt files have a hard limit: they are mostly static.

They represent what someone remembered to write down. They do not automatically learn from yesterday’s debugging session. They do not notice that three agents failed the same test for the same reason. They do not turn repeated corrections into reusable guidance. They do not preserve the difference between a durable architectural decision and a one-off workaround.

That is why treating prompt files as agent memory is a category mistake. A prompt file is an instruction surface. Memory is a learning surface.

A real memory system needs to answer different questions:

- What happened in previous sessions?

- Which decisions were made and why?

- Which patterns keep recurring?

- Which mistakes should not be repeated?

- Which memories are still valid?

- Which memories are sensitive and should not be reused automatically?

- Which memories belong to a person, a project, a team, or an organization?

Once those questions appear, the issue stops being a prompt-engineering problem and becomes a systems-design problem.

Why the Agent Memory Control Plane Matters Now

AI coding agents are becoming normal work tools. Claude Code, Codex, Cursor, and similar systems are not just chat boxes. They can read files, edit code, call tools, run commands, interact with repositories, and participate in multi-step workflows.

That means they create operational history.

For a business, that history has value. It contains project conventions, technical decisions, failure patterns, unresolved constraints, security boundaries, deployment assumptions, and workflow knowledge. If that history stays trapped inside chat transcripts, local tool state, or vendor-specific memory, the business does not really own it.

This is the hidden lock-in problem. Companies often think AI lock-in means model dependency. That is only part of it. The deeper lock-in may come from accumulated context: the memory of how people and agents worked together.

A team can switch models faster than it can reconstruct months of project context.

That is why the agent memory control plane deserves executive attention. It changes the question from “Which coding agent should we use?” to “Where should our agent memory live, and who controls how it is captured, governed, and reused?”

MCP Gives Agents Access to Memory. Hooks Give Memory Access to Agents.

The clearest way to understand the architecture is to separate MCP from hooks.

Model Context Protocol, or MCP, is an open protocol for connecting AI applications to external systems. MCP tools let models interact with databases, APIs, files, and other services. In the MCP tools specification, tools are described as model-controlled: the language model can discover and invoke tools based on context and the user’s prompt. The specification also notes that applications should make exposed tools clear and support human confirmation for operations.

That is useful. It gives agents a standard way to reach outside themselves.

But MCP alone does not solve memory. It gives the agent a way to ask for memory. The model still has to decide that memory is needed, select the right tool, ask the right question, and use the result properly.

Hooks solve a different problem.

Hooks run at defined lifecycle points. Claude Code’s hook documentation describes events that fire at session, turn, and tool-call cadences, including events such as session start, user prompt submission, pre-tool use, post-tool use, stop, compaction, and session end. OpenAI’s Codex documentation describes hooks as deterministic scripts that run during the Codex lifecycle and can support logging, prompt scanning, conversation summarization for persistent memories, validation, and prompt customization. Cursor describes hooks as a way for organizations to observe, control, and extend the agent loop using custom scripts before or after defined stages.

That is the distinction:

| Common Belief | Production Reality | Better Question |
|---|---|---|
| Prompt files are agent memory. | Prompt files are static instructions, not a record of what happened. | What should the system learn from completed sessions? |
| MCP solves memory. | MCP can expose memory, but the model may or may not retrieve it. | Which context should be injected automatically versus queried on demand? |
| Bigger context windows fix continuity. | Long context can still be noisy, expensive, stale, or poorly used. | What high-signal memory should survive across tools and sessions? |

MCP gives agents access to memory. Hooks give memory access to agents.

That sentence is the heart of the agent memory control plane.

Hooks Are the Agent Event Bus

Hooks matter because they are deterministic.

They can fire when a session starts, when a prompt is submitted, before a shell command runs, after a file is edited, when a tool fails, when a session stops, or when context is compacted. The exact events vary by tool, and that nuance matters. Claude Code, Codex, and Cursor do not expose identical hook systems. Codex hooks are documented behind a feature flag in current OpenAI documentation. Cursor’s public hook materials emphasize organizational control and security integrations. Claude Code has a broad documented lifecycle with many event types.

So the correct claim is not that hooks are a universal standard.

The correct claim is that hook-style lifecycle control is becoming a cross-harness design pattern for agent observability, governance, and memory capture.

That pattern creates a practical event bus around the agent. Instead of hoping the model remembers to store something, the system can observe what happened:

- A session started in a specific repository.

- A user submitted a task.

- The agent read certain files.

- The agent called a tool.

- A command failed.

- A patch changed certain modules.

- A test passed or failed.

- A human denied a risky action.

- The session ended with a useful result.

- Context was compacted or summarized.

Those events are not memory by themselves. They are raw material.
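The capture side of that event bus can be sketched in a few lines. This is a minimal illustration, assuming a hook script hands over a JSON-like payload; the neutral schema, the field names (`session_id`, `event`, `cwd`, `tool_name`, `ok`), and the helper names are hypothetical and do not match any specific tool's hook payload.

```python
import json
import time
from pathlib import Path
from typing import Any


def normalize_event(harness: str, payload: dict[str, Any]) -> dict[str, Any]:
    """Map a harness-specific payload onto one neutral event record.

    The input keys are assumptions for illustration; a real adapter
    would translate each tool's documented payload separately.
    """
    return {
        "ts": payload.get("ts", time.time()),
        "harness": harness,
        "session_id": payload.get("session_id", "unknown"),
        "event": payload.get("event", "unknown"),
        "repo": payload.get("cwd"),
        "tool": payload.get("tool_name"),
        "ok": payload.get("ok"),
    }


def append_event(log_path: Path, event: dict[str, Any]) -> None:
    """Append one event as a JSON line; the log stays append-only raw material."""
    log_path.parent.mkdir(parents=True, exist_ok=True)
    with log_path.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(event, sort_keys=True) + "\n")


def read_events(log_path: Path) -> list[dict[str, Any]]:
    """Load the raw log back for later consolidation."""
    with log_path.open(encoding="utf-8") as fh:
        return [json.loads(line) for line in fh if line.strip()]
```

Keeping the log in a neutral, harness-tagged format is one way to keep the raw history outside any single tool, which is the ownership point the article argues for.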

A flight recorder is not a pilot. But without the flight recorder, you cannot reconstruct what happened with confidence.

Raw Logs Are Not Memory

This is where many agent-memory ideas become sloppy. Saving every event is not the same as remembering well.

Raw logs are too noisy. They contain repeated information, temporary details, failed guesses, sensitive data, command output, stale assumptions, and, if teams are careless, credentials. If a company stores everything and blindly reinjects it later, it may make the agent worse rather than better.

A useful agent memory control plane needs a consolidation layer.

That layer should turn event history into structured memory. It should summarize, deduplicate, classify, and score what was learned. It should separate different memory types: recent working context, durable project facts, user preferences, coding conventions, decisions, reasoning traces, and tool usage patterns.
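As a rough sketch of the deduplicate, classify, and score steps, the example below groups observations that say the same thing and keeps only those seen in more than one session. The `Observation` and `CandidateMemory` types, the normalization rule, and the "distinct sessions" score are all assumptions chosen for illustration; a real consolidation layer would likely use richer matching and summarization.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    session_id: str
    kind: str  # hypothetical classes, e.g. "convention", "decision", "failure"
    text: str


@dataclass
class CandidateMemory:
    kind: str
    text: str
    sessions: set[str]

    @property
    def score(self) -> int:
        # Score = number of distinct sessions that produced this observation.
        return len(self.sessions)


def _key(obs: Observation) -> tuple[str, str]:
    # Crude deduplication key: same kind, case and whitespace ignored,
    # trailing period dropped.
    return obs.kind, " ".join(obs.text.lower().split()).rstrip(".")


def consolidate(observations: list[Observation], min_sessions: int = 2) -> list[CandidateMemory]:
    """Deduplicate observations and keep those that recur across sessions."""
    grouped: dict[tuple[str, str], CandidateMemory] = {}
    for obs in observations:
        key = _key(obs)
        if key not in grouped:
            grouped[key] = CandidateMemory(obs.kind, obs.text.strip(), set())
        grouped[key].sessions.add(obs.session_id)
    kept = [c for c in grouped.values() if c.score >= min_sessions]
    # Highest-scoring candidates first; ties broken by text for stable output.
    return sorted(kept, key=lambda c: (-c.score, c.text))
```

The output is a list of candidates, not trusted memory: a one-off observation is dropped here, and what survives would still need review before being stored.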

Neo4j’s agent memory work is useful here because it frames memory as more than a pile of notes. Its public materials describe short-term memory for conversations, long-term memory for entities, preferences, and facts, and reasoning memory for traces and tool usage. Whether a team uses Neo4j or another store, the mental model is right: memory needs structure, relationships, provenance, and retrieval discipline.

A good memory layer should produce statements like:

- This repository treats generated files as read-only.

- This project uses a specific background-job pattern.

- Previous attempts to upgrade a dependency failed because of a version conflict.

- Production deployment requires a human approval step.

- This team prefers small, isolated pull requests.

- This API should not be called from the client layer.

- This test suite must run before marking refactors complete.

Those memories are more useful than a transcript dump because they are compressed, reviewed, and reusable.

Injection Is Different From Retrieval

Most AI memory discussion focuses on retrieval: the agent searches for context when it needs it.

Retrieval is important. It keeps active context smaller and gives the agent access to a broader memory store. But retrieval has a weakness: the model has to know when to ask.

Memory injection solves a different problem. It provides relevant memory at the start of the session or at key lifecycle moments, before the model has to infer that it is missing something.

The distinction is practical.

Retrieval asks: “Should I go look something up?”

Injection says: “Here is what you need before you begin.”

A mature agent memory control plane will use both. It may inject a short project memory at session start, add task-specific memory when the user prompt arrives, and still expose deeper memory through MCP tools when the agent needs more detail. The point is not to choose hooks over MCP. The point is to put them in the right roles.

Hooks capture and inject at reliable moments. MCP enables dynamic access when the model needs more.

Together, they make agent memory less dependent on chance.
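The two roles can be shown side by side. In this sketch, `build_injection` is the push path a session-start hook might call, and `search_memory` is the pull path that could sit behind an MCP tool. The `Memory` type, the character budget, and the keyword matching are illustrative assumptions, not any tool's actual API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Memory:
    repo: str
    text: str
    priority: int  # higher means more important to inject


def build_injection(memories: list[Memory], repo: str, max_chars: int = 400) -> str:
    """Push path: choose the top memories for this repo within a size budget."""
    relevant = sorted((m for m in memories if m.repo == repo), key=lambda m: -m.priority)
    lines: list[str] = []
    used = 0
    for m in relevant:
        line = f"- {m.text}"
        cost = len(line) + 1  # +1 for the newline separator
        if used + cost > max_chars:
            continue  # skip entries that do not fit; a shorter one may still fit
        lines.append(line)
        used += cost
    return "\n".join(lines)


def search_memory(memories: list[Memory], repo: str, query: str) -> list[str]:
    """Pull path: naive keyword search the model would have to choose to call."""
    terms = query.lower().split()
    return [
        m.text
        for m in memories
        if m.repo == repo and all(t in m.text.lower() for t in terms)
    ]
```

The budget is the important design choice: injection runs whether or not the model asks, so it should stay small and high-signal, while the search path can expose the larger store on demand.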

The Security Boundary Nobody Should Ignore

Hooks are powerful because they sit close to the work. That also makes them sensitive.

Claude Code’s documentation warns that command hooks run with the user’s full system permissions and can modify, delete, or access files the user account can access. Its security guidance recommends validating inputs, quoting shell variables, blocking path traversal, using absolute paths, and skipping sensitive files. Cursor’s hook materials describe integrations for MCP governance, code security, dependency security, agent safety, and secrets management.

This is exactly why the agent memory control plane should be treated as infrastructure, not a clever productivity script.

A memory hook may see prompts, tool inputs, command text, file paths, edited code, test output, and session summaries. In the wrong design, it can collect secrets, regulated data, customer information, proprietary code, or unreviewed model guesses. In the right design, it can redact sensitive data, preserve audit trails, enforce policy, and prevent repeated mistakes.
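A redaction pass before anything is stored might look like the sketch below. The two regular expressions and the list of sensitive file names are deliberately small, illustrative assumptions; they are not an exhaustive secret scanner, and a production system would more likely rely on a dedicated scanning tool.

```python
import re
from fnmatch import fnmatchcase
from pathlib import PurePosixPath

# Illustrative rules only: (rule name, pattern, replacement).
_RULES = [
    ("aws_access_key_id", re.compile(r"\bAKIA[0-9A-Z]{16}\b"), "[REDACTED]"),
    (
        "credential_assignment",
        re.compile(r"(?i)\b(api[_-]?key|token|password|secret)(\s*[=:]\s*)\S+"),
        r"\1\2[REDACTED]",  # keep the key name, drop the value
    ),
]

# Hypothetical list of file names a memory hook should never read or store.
_SENSITIVE_NAMES = (".env", ".env.*", "*.pem", "id_rsa")


def redact(text: str) -> tuple[str, list[str]]:
    """Return the redacted text plus the names of the rules that fired."""
    hits: list[str] = []
    for name, pattern, replacement in _RULES:
        text, count = pattern.subn(replacement, text)
        if count:
            hits.append(name)
    return text, hits


def is_sensitive_path(path: str) -> bool:
    """True if the file name matches one of the sensitive name patterns."""
    name = PurePosixPath(path).name
    return any(fnmatchcase(name, pat) for pat in _SENSITIVE_NAMES)
```

Returning the list of fired rules alongside the cleaned text gives the audit trail something to record without keeping the secret itself.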

The difference is governance.

| Decision Area | Why It Matters | What Can Go Wrong |
|---|---|---|
| Memory capture | Determines what becomes reusable context. | Sensitive data, noisy logs, or stale assumptions get stored. |
| Memory consolidation | Turns raw events into useful knowledge. | Bad summaries become false project facts. |
| Memory injection | Decides what the agent sees automatically. | Irrelevant or unsafe context steers future work. |
| Access control | Separates personal, project, team, and organizational memory. | One team’s context leaks into another team’s work. |
| Retention | Limits how long memories survive. | Old decisions keep influencing new work after conditions change. |

For business leaders, the uncomfortable truth is simple: memory is not just a capability. It is a control surface.

What Leaders Should Fund

The executive version of this argument is not “buy a graph database” or “install hooks everywhere.”

The executive version is: do not let valuable agent memory accumulate accidentally.

Leaders should fund the control layer around agentic tools. That includes observability, event capture, memory storage, redaction, review, evaluation, and policy enforcement. Tool subscriptions alone are not an operating model.

A practical pilot does not need to start with highly autonomous production changes. Start with lower-risk workflows where memory failure is visible but the downside is limited: repository orientation, documentation updates, test repair, dependency investigation, internal tooling, or coding-standard enforcement.

Then measure the right things:

- Are agents repeating fewer known mistakes?

- Are developers re-explaining less context?

- Are project conventions followed more consistently?

- Are memory entries traceable to actual events?

- Are sensitive items being excluded or redacted?

- Are old memories reviewed or expired?

- Are teams able to move memory across tools?

If the answer to most of these questions is no, the system is not yet a memory control plane. It is just another logging layer.

What Builders Should Verify

For engineers, the design challenge is not only capture. Capture is the easy part.

The hard part is memory quality.

Technical teams should verify that the system can distinguish durable knowledge from temporary output. A failed experiment should not become a permanent rule. A single developer preference should not become a team standard. A stale dependency note should not override current documentation. A model-generated explanation should not become trusted memory unless it is grounded in files, tests, decisions, or human review.

A useful implementation should preserve provenance. Every durable memory should be able to answer: Where did this come from? When was it created? Who or what approved it? What evidence supports it? When should it expire?
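Those five questions map naturally onto fields of a record. The sketch below is one possible shape, assuming a hypothetical `MemoryRecord` type; the field names and the gap labels are illustrative, not a standard schema.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class MemoryRecord:
    text: str
    scope: str                          # "person", "project", "team", or "organization"
    source_event_ids: tuple[str, ...]   # where did this come from?
    created_at: datetime                # when was it created?
    approved_by: Optional[str]          # who or what approved it?
    evidence: tuple[str, ...]           # what supports it (files, tests, decisions)?
    expires_at: Optional[datetime]      # when should it expire?

    def provenance_gaps(self, now: datetime) -> list[str]:
        """List the provenance questions this record cannot answer."""
        gaps: list[str] = []
        if not self.source_event_ids:
            gaps.append("no source events")
        if self.approved_by is None:
            gaps.append("not approved")
        if not self.evidence:
            gaps.append("no evidence")
        if self.expires_at is None:
            gaps.append("no expiry")
        elif self.expires_at <= now:
            gaps.append("expired")
        return gaps
```

A record with an empty gap list is a candidate for automatic reuse; anything else can be held back or sent for review rather than silently injected.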

That may sound heavy, but without those controls, persistent AI agent memory becomes a liability. It gives future agents confidence without accountability.

The better pattern is staged:

- Capture raw lifecycle events.

- Redact and filter sensitive material.

- Consolidate high-signal events into candidate memories.

- Review candidate memories, automatically approving only low-risk categories.

- Store memory with provenance and scope.

- Inject only relevant memory at defined lifecycle moments.

- Evaluate whether memory improves outcomes over time.

That is the operating discipline behind the agent memory control plane.
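The approval step in that staged pattern can be expressed as a small policy function. This is a sketch under stated assumptions: the category names in `LOW_RISK_CATEGORIES`, the `grounded` flag, and the three outcomes are hypothetical, and each team would define its own.

```python
from enum import Enum


class Decision(Enum):
    AUTO_APPROVE = "auto_approve"
    NEEDS_REVIEW = "needs_review"
    REJECT = "reject"


# Hypothetical low-risk categories that may skip human review.
LOW_RISK_CATEGORIES = frozenset({"coding_convention", "repo_layout"})


def gate(category: str, grounded: bool, contains_sensitive: bool) -> Decision:
    """Decide how a candidate memory moves from 'candidate' to 'stored'.

    grounded: the candidate is backed by files, tests, decisions, or
    human input rather than only a model-generated explanation.
    """
    if contains_sensitive:
        return Decision.REJECT  # never store; redaction should have caught it
    if not grounded:
        return Decision.NEEDS_REVIEW  # model guesses need a human
    if category in LOW_RISK_CATEGORIES:
        return Decision.AUTO_APPROVE
    return Decision.NEEDS_REVIEW
```

For example, `gate("coding_convention", grounded=True, contains_sensitive=False)` returns `Decision.AUTO_APPROVE`, while the same candidate in a hypothetical `"deployment"` category is routed to review.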

A Better Mental Model: Memory as a Compiler

The strongest mental model is not “the agent has a notebook.”

It is “the system compiles experience into memory.”

Raw agent events are like source material. They are messy, verbose, and context-dependent. The memory layer compiles that material into smaller, structured, higher-signal artifacts that future sessions can use.

The pipeline looks like this:

Raw agent events → Hook-based event log → Memory consolidation → Structured project and user memory → Context injection into any harness → Optional MCP querying for deeper recall

That model is useful because it separates activity from knowledge. An agent doing something is not the same as the organization learning something. The memory control plane is the layer that decides which experiences deserve to become reusable knowledge.

It also keeps the business conversation grounded. Bigger models may help. Better tools may help. Larger context windows may help. But none of those automatically answer the ownership question.

Who owns the memory created by AI work?

If the answer is “whatever the current tool happens to store,” the organization has not made an architecture decision. It has inherited one.

The Future Belongs to User-Owned Agent Memory

The future of AI coding agents will not be decided only by which model writes the best function on the first try. Serious teams will care about continuity, security, auditability, cost, portability, and institutional learning.

That pushes memory outside the chat box.

An agent memory control plane does not require pretending agents are autonomous employees. It requires treating them as operational systems that create traces, decisions, and reusable context. Hooks make those traces observable. Consolidation turns them into memory. Injection makes the memory available at the right time. MCP can extend the system with deeper retrieval.

The result is not magic. It is better architecture.

The companies that get this right will not be the ones that merely collect the most agent logs. They will be the ones that decide what should be remembered, what should be forgotten, what should be reviewed, and what should follow the team across tools.

The most valuable memory in an AI workflow is not what the model remembers. It is what the system can verify, govern, and reuse.

Key Takeaways

- Prompt files are useful instructions, but they are not durable agent memory.

- MCP gives agents a way to access external tools and memory, but retrieval still depends on model behavior.

- Hooks create deterministic lifecycle touchpoints for observation, logging, validation, policy enforcement, and memory capture.

- An agent memory control plane should live outside any single model, IDE, or coding-agent harness.

- Raw logs are not memory; useful memory requires consolidation, redaction, provenance, and review.

- Memory injection and memory retrieval solve different problems and should work together.

- Agent memory is a security and governance boundary, not just a productivity feature.

- The real business question is who owns the context created by AI-assisted work.

Practical Decision Framework

Use this framework before scaling persistent AI agent memory across a team.

| Decision Area | What to Ask | What to Measure |
|---|---|---|
| Ownership | Does memory belong to the user, the team, the company, or the vendor tool? | Share of reusable context stored outside a single agent harness. |
| Capture | Which lifecycle events are worth recording? | Signal-to-noise ratio in captured events and reduction in repeated rework. |
| Consolidation | How are raw events turned into trusted memory? | Memory acceptance rate, correction rate, stale-memory rate, and provenance coverage. |
| Injection | What context should be inserted automatically? | Improvement in task success, fewer repeated mistakes, and lower re-explanation burden. |
| Governance | What must be redacted, reviewed, expired, or blocked? | Sensitive-data capture rate, review coverage, retention compliance, and audit completeness. |
| Portability | Can memory move across Claude Code, Codex, Cursor, or future tools? | Number of supported harnesses and percentage of memory stored in neutral infrastructure. |

Leaders should fund the control layer, not just the agent license. Product teams should pilot memory on low-risk workflows before using it around production changes. Engineering teams should verify provenance, redaction, access control, and measurable quality improvement. Security teams should treat memory capture as a data-handling surface. Operators should measure whether the agent stops repeating known mistakes.

The initiative is working only if memory improves continuity without weakening control.
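Several of the consolidation measures in the framework reduce to simple ratios over the memory store. The sketch below assumes each record is a dictionary with hypothetical keys (`source_event_ids`, `stale`, `corrected`); how a team decides that a memory is stale or was corrected is outside its scope.

```python
from typing import Any


def memory_quality(records: list[dict[str, Any]]) -> dict[str, float]:
    """Compute provenance coverage, stale-memory rate, and correction rate."""
    total = len(records)
    if total == 0:
        return {"provenance_coverage": 0.0, "stale_rate": 0.0, "correction_rate": 0.0}
    with_provenance = sum(1 for r in records if r.get("source_event_ids"))
    stale = sum(1 for r in records if r.get("stale"))
    corrected = sum(1 for r in records if r.get("corrected"))
    return {
        "provenance_coverage": with_provenance / total,
        "stale_rate": stale / total,
        "correction_rate": corrected / total,
    }
```

Tracking these ratios over time, rather than as a one-off snapshot, is what shows whether memory quality is improving or decaying.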

FAQ

What is an agent memory control plane?

An agent memory control plane is an external layer that captures AI agent lifecycle events, consolidates useful history into durable memory, governs what can be reused, and injects relevant context into future sessions across tools.

Why are prompt files not enough for AI agent memory?

Prompt files are static instructions. They can describe project rules, but they do not automatically learn from previous sessions, failed attempts, tool calls, human corrections, or recurring workflow patterns.

How are hooks different from MCP tools?

MCP tools let models access external systems when the model chooses to call them. Hooks run at defined lifecycle points, such as before tool use, after tool use, session start, or session stop. That makes hooks useful for deterministic observation and control.

Should every company build persistent AI agent memory?

No. Teams should start only where repeated context loss is costly and where memory can be governed safely. Low-risk engineering workflows, documentation work, test repair, and repository orientation are better starting points than sensitive production operations.

What are the biggest risks of agent memory?

The biggest risks are storing sensitive data, preserving false or stale conclusions, injecting irrelevant context, creating new security exposure, and allowing one tool or vendor to control strategically valuable workflow memory.

Do hooks replace MCP?

No. Hooks and MCP solve different problems. Hooks are useful for lifecycle capture, policy checks, and memory injection. MCP is useful for exposing tools, data, and deeper retrieval. A serious architecture may use both.

Sources

- Anthropic Claude Code Hooks Reference: https://code.claude.com/docs/en/hooks

- Anthropic Claude Code Hooks Guide: https://code.claude.com/docs/en/hooks-guide

- OpenAI Codex Hooks: https://developers.openai.com/codex/hooks

- OpenAI Codex Advanced Configuration: https://developers.openai.com/codex/config-advanced

- Cursor Hooks for Security and Platform Teams: https://cursor.com/blog/hooks-partners

- Model Context Protocol Tools Specification: https://modelcontextprotocol.io/specification/draft/server/tools

- Neo4j Agent Memory: https://neo4j.com/labs/agent-memory/

- Neo4j Agent Memory Types: https://neo4j.com/labs/agent-memory/explanation/memory-types/

- Google Search Essentials: https://developers.google.com/search/docs/essentials

Related articles from Kyle Beyke

- Building Brilliant Modern Agentic AI Systems for Business: https://kylebeyke.com/how-modern-agentic-ai-systems-are-built-for-business/

- 9 BEST Agentic AI Business Tips: https://kylebeyke.com/9-best-agentic-ai-business-tips/

- Why Context Windows, Hallucinations, and Memory Limits Still Break Modern LLMs: https://kylebeyke.com/llm-context-window-hallucination-memory-limitations/