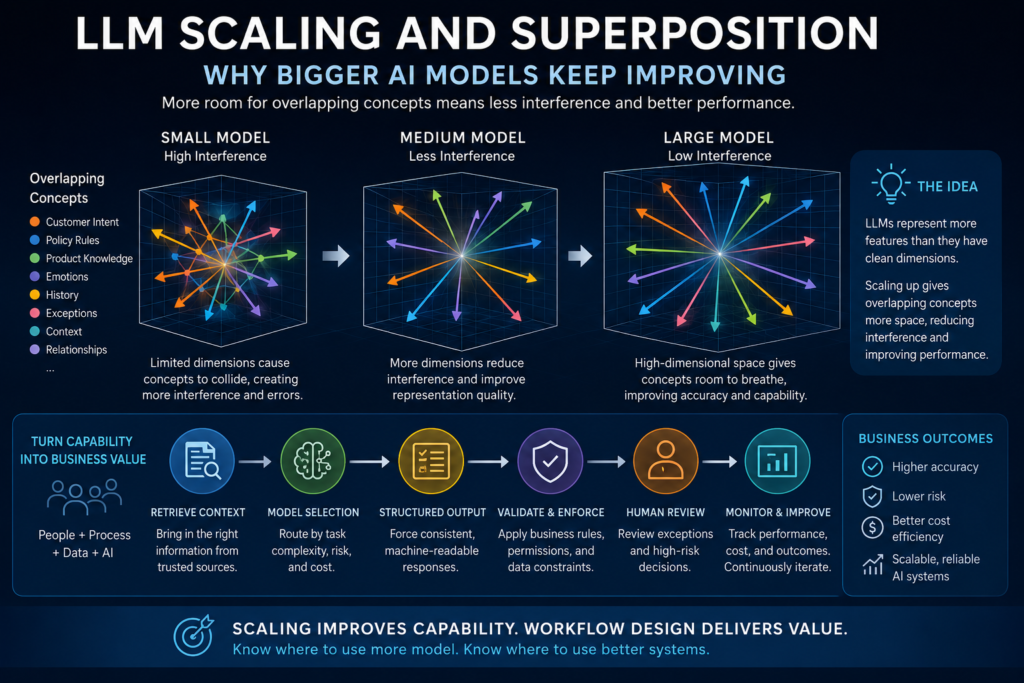

LLM scaling works for deeper reasons than “more parameters means more intelligence.” MIT’s superposition research suggests that bigger models improve partly because they give overlapping concepts more room, so those concepts interfere with one another less. But businesses only turn that capability into value when they apply it to the right workflows.

Bigger AI models work for a reason

LLM scaling is often discussed in the least useful way possible.

One side says bigger AI models are obviously better. The other side says smaller models are catching up and the scaling era is over. Both arguments miss the more interesting question: why does making a model bigger keep improving its performance so reliably in the first place?

That question matters to researchers, but it also matters to businesses. Executives do not need to memorize neural scaling equations. Product leaders do not need to become mechanistic interpretability researchers. Engineering managers do not need to explain every vector-space detail to choose a model for a support workflow, document review assistant, product content system, or internal knowledge tool.

But they do need a better mental model than “bigger is smarter.”

A 2025 MIT paper, Superposition Yields Robust Neural Scaling, offers one of the clearer explanations for why LLM scaling may work so reliably. The paper argues that large language models represent more features than they have clean internal dimensions. Those features overlap, and that overlap creates interference. When models get wider, the interference between overlapping representations can shrink, producing more reliable improvement as scale increases. The authors describe this as representation superposition contributing to neural scaling, and they report that the open-source LLM families they analyzed exhibit strong superposition, consistent with their toy model’s predictions.

In plain English: bigger models may improve because crowded ideas get more room.

That is a powerful idea. It explains why scaling is not just brute force. It also explains why scale is not magic. A larger model can have more representational capacity, but it still does not automatically know your company’s private policies, verify facts, follow permissions, manage risk, or fit neatly into a business workflow.

The practical lesson is simple: scaling improves capability. It does not replace system design.

What LLM scaling means in plain English

LLM scaling refers to the observed pattern that language model performance often improves predictably as model size, training data, and compute increase. The classic scaling-laws work from Kaplan and colleagues found that language model loss follows power-law relationships with model size, dataset size, and compute over large ranges.
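To make “power law” concrete, here is a small Python sketch of the parameter-count relationship as Kaplan and colleagues reported it: L(N) = (N_c / N)^α_N, with α_N ≈ 0.076 and N_c ≈ 8.8 × 10^13 non-embedding parameters. Those fitted constants belong to that paper’s dataset, tokenizer, and training setup. They also assume that data and compute are not the bottleneck. Treat the printed numbers as an illustration of the curve’s shape, not a forecast for any model you might buy.

```python
# Illustrative only: the Kaplan et al. (2020) fit of loss against model size,
# which holds in their setup when data and compute are not the limiting factor.
ALPHA_N = 0.076   # fitted exponent reported in the paper
N_C = 8.8e13      # fitted constant, in non-embedding parameters


def kaplan_loss(n_params: float) -> float:
    """Predicted cross-entropy loss (nats per token): L(N) = (N_c / N) ** alpha_N."""
    return (N_C / n_params) ** ALPHA_N


for n in (1e8, 1e9, 1e10, 1e11):
    print(f"{n:.0e} parameters -> predicted loss {kaplan_loss(n):.3f}")

# Every doubling of N multiplies the loss by the same factor, 2 ** -alpha_N (about 0.949).
doubling_factor = kaplan_loss(2e9) / kaplan_loss(1e9)
print(f"loss ratio per doubling: {doubling_factor:.3f}")
```

The useful intuition is the constant ratio. Each doubling of parameters buys roughly the same proportional reduction in loss, which is why scaling looks so regular on a log-log plot.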

That does not mean every bigger model is better for every task. It means that, in broad empirical terms, scale has been a reliable way to reduce loss and improve capability.

DeepMind’s later Chinchilla paper complicated the simple “just make the model bigger” story. It argued that many large language models had been undertrained relative to their size and that compute-optimal training requires scaling model size and training data together. In other words, bigger is not enough; the relationship between parameters, data, and compute matters.
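A common back-of-the-envelope reading of that result uses two approximations:

- Training compute is C ≈ 6·N·D FLOPs for N parameters and D training tokens.
- A compute-optimal ratio is roughly 20 tokens per parameter.

Both are rules of thumb rather than exact results, and the 20:1 ratio in particular is an assumption in this sketch. The sketch splits a fixed compute budget between model size and data.

```python
import math

FLOPS_PER_PARAM_TOKEN = 6.0   # common approximation: C ~= 6 * N * D
TOKENS_PER_PARAM = 20.0       # rule-of-thumb compute-optimal ratio (assumption)


def compute_optimal_split(compute_flops: float) -> tuple[float, float]:
    """Return (parameters, tokens) that spend the budget at the assumed ratio.

    Solving C = 6 * N * (20 * N) for N gives N = sqrt(C / 120).
    """
    n_params = math.sqrt(compute_flops / (FLOPS_PER_PARAM_TOKEN * TOKENS_PER_PARAM))
    n_tokens = TOKENS_PER_PARAM * n_params
    return n_params, n_tokens


# Chinchilla itself was about 70B parameters trained on about 1.4T tokens.
budget = 6.0 * 70e9 * 1.4e12
params, tokens = compute_optimal_split(budget)
print(f"parameters ~ {params:.2e}, tokens ~ {tokens:.2e}")

# Doubling compute grows both N and D by sqrt(2), not N alone.
bigger_params, bigger_tokens = compute_optimal_split(2 * budget)
print(f"parameter growth when compute doubles: {bigger_params / params:.3f}")
```

Under these assumptions, extra compute should be divided between parameters and data rather than spent on parameters alone. That is the practical point of the Chinchilla correction.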

For businesses, this is already more nuanced than most model-selection conversations.

The practical question is not:

“Should we use the biggest model?”

The better question is:

“What kind of capability does this workflow need, and what is the cheapest reliable way to get it?”

The MIT superposition paper adds another layer. It asks why scaling laws appear so robust. Why does adding model width often help? Why does loss fall in such regular patterns? Why do large models seem to absorb so many more concepts, associations, patterns, and edge cases?

The proposed answer is not that models become conscious, intentional, or magically intelligent. The proposed answer is geometric.

Superposition: when a model has more concepts than clean slots

Superposition is one of those technical terms that sounds abstract until you translate it into a capacity problem.

A model has internal dimensions. It also has to represent features: words, fragments, meanings, associations, facts, styles, patterns, relationships, syntax, concepts, and combinations of concepts. The number of useful things the model might need to represent is far larger than the number of clean, isolated internal slots available.

So the model packs representations together.

That is superposition: representing more features than there are independent dimensions by allowing them to overlap.

The idea has been studied in interpretability research, including Anthropic’s Toy Models of Superposition, which explored how neural networks can store additional sparse features in superposition and how that connects to polysemanticity, where a neuron or representation participates in multiple meanings rather than one clean concept.

A simple analogy helps.

Imagine a warehouse with fewer shelves than products. If you only store the most popular products, your system is clean but incomplete. If you store everything by letting categories overlap, the warehouse becomes more useful, but retrieval gets messier. The question is whether the overlap creates manageable noise or destructive confusion.

Language models face a similar problem. They need to represent a huge number of concepts, and many of those concepts are sparse. They do not all appear in every input. They appear unevenly, in different combinations, with different frequencies. Some are common. Some are rare. Some are clean. Some are ambiguous. Some collide with others.

A small model has less room. Concepts overlap more aggressively. That can create interference.

A larger model has more room. Concepts can still overlap, but the overlap may become less damaging.

That is the important business translation: model size is not just a vanity metric. It is capacity for representing complexity.

What MIT’s paper adds to the scaling debate

The MIT paper starts from two empirical principles: language models represent more features than they have dimensions, and language features occur with uneven frequencies. From there, the authors build a toy model to study how loss changes with model size under different degrees of superposition.

The distinction between weak and strong superposition is central.

In weak superposition, only the most frequent features are represented cleanly, while less frequent features are ignored or poorly represented. In that regime, loss depends heavily on how feature frequencies are distributed: if they follow a power law, the loss contributed by the ignored tail can also follow a power law in model size.
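The weak-superposition mechanism can be illustrated with a toy calculation. This sketch is not the paper’s model. It makes two assumptions:

- Feature frequencies follow p_i ∝ i^-α.
- A model of a given width cleanly stores exactly that many of the most frequent features and ignores everything else.

Under those assumptions, the loss is simply the frequency mass of the ignored tail.

```python
import math


def power_law_frequencies(n_features: int, alpha: float) -> list[float]:
    """Feature frequencies p_i proportional to i ** -alpha, normalized to sum to 1."""
    weights = [i ** -alpha for i in range(1, n_features + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def weak_superposition_loss(freqs: list[float], width: int) -> float:
    """Toy loss: probability mass of the features the model ignores.

    Assumes `freqs` is sorted from most to least frequent and that a model of
    this width stores the `width` most frequent features and drops the rest.
    """
    return sum(freqs[width:])


freqs = power_law_frequencies(200_000, alpha=2.0)
widths = (100, 1_000, 10_000)
losses = [weak_superposition_loss(freqs, m) for m in widths]
for m, loss in zip(widths, losses):
    print(f"width={m:>6}  loss={loss:.2e}")

# Slope on a log-log plot; for this toy it lands near -(alpha - 1).
slope = (math.log(losses[1]) - math.log(losses[0])) / (math.log(widths[1]) - math.log(widths[0]))
print(f"log-log slope ~ {slope:.2f}")
```

With α = 2 the tail mass falls by about a factor of ten for every tenfold increase in width, so the log-log slope lands near -(α - 1). Change α and the slope changes with it. That is the sense in which weak-superposition scaling depends on the data’s frequency distribution.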

In strong superposition, many more features are represented, but they overlap. Instead of coming mostly from ignored features, loss comes from interference among represented features. The MIT paper argues that this interference can shrink roughly in inverse proportion to model dimension because of geometry: when many vectors are packed into a higher-dimensional space, their squared overlaps tend to get smaller. The paper summarizes this as robust “one over width” scaling in the strong superposition regime.

That is the core idea.

The model improves not simply because it has more stuff inside it. It improves because the stuff inside it collides less destructively.
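The geometric fact behind the “one over width” claim is easy to check numerically. This sketch assumes feature directions are random unit vectors. That is a simplification, since trained models arrange features more deliberately. It measures their average squared overlap, whose expected value for random directions in d dimensions is exactly 1/d. It illustrates the geometry only; it is not the paper’s toy model or its measurement on real LLMs.

```python
import math
import random


def random_unit_vector(dim: int, rng: random.Random) -> list[float]:
    """Sample a uniformly random direction by normalizing a Gaussian vector."""
    v = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def mean_squared_overlap(dim: int, n_features: int, seed: int = 0) -> float:
    """Average squared dot product over all pairs of distinct random feature directions."""
    rng = random.Random(seed)
    vecs = [random_unit_vector(dim, rng) for _ in range(n_features)]
    total, pairs = 0.0, 0
    for i in range(n_features):
        for j in range(i + 1, n_features):
            dot = sum(a * b for a, b in zip(vecs[i], vecs[j]))
            total += dot * dot
            pairs += 1
    return total / pairs


results = {dim: mean_squared_overlap(dim, n_features=120) for dim in (16, 64, 256)}
for dim, overlap in results.items():
    print(f"d={dim:>3}  mean squared overlap={overlap:.4f}  1/d={1 / dim:.4f}")
```

Quadrupling the width cuts the average squared overlap by roughly a factor of four. If interference loss tracks squared overlap, as the paper argues for the strong regime, loss falls roughly as one over width.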

This is why the paper is useful outside research circles. It gives business readers a better explanation for why bigger models often handle ambiguity, nuance, and concept-heavy tasks better. They may have more room to separate overlapping ideas.

A customer email that mixes anger, a billing dispute, a damaged shipment, a policy exception, and a loyalty concern is not just a “text classification” problem. It contains overlapping signals. A legal clause summary that depends on definitions, exceptions, references, and implied obligations is not just a summarization task. A merchandising decision that involves vendor history, product taxonomy, seasonality, margin, and customer intent is not just extraction.

These are concept-collision problems.

Bigger models may do better because they can represent more of those overlapping concepts with less internal interference.

Why this does not mean bigger models are always better

The wrong conclusion is obvious: if bigger models reduce interference, just use the biggest model everywhere.

That is not strategy. That is defaulting.

Scaling can improve capability, but it does not solve every production AI problem. A larger model does not automatically become truthful. It does not automatically retrieve the right business document. It does not automatically know which user has permission to see which data. It does not automatically produce valid JSON. It does not automatically route an exception to a qualified human. It does not automatically make the workflow auditable.

Those are system-design problems.

The MIT paper is careful about its own limits. It focuses on representation loss and notes that LLMs also have other sources of loss, including processing inside transformer layers. The paper also describes future research directions rather than claiming that superposition explains all aspects of model behavior.

Businesses should be equally careful.

Bigger models can help when the task itself is conceptually complex. They are less useful when the main problem is bad data, missing context, weak instructions, poor workflow design, no evaluation set, unclear ownership, or lack of validation.

A larger model may write a better answer from the wrong source. It may produce a more fluent hallucination. It may make a subtle policy mistake sound confident. It may still fail to follow a business rule that should have been enforced outside the model.

That is the difference between model capability and operational reliability.

Capability lives in the model.

Reliability lives in the system.

The business question behind superposition

The business question is not whether LLM scaling works. The evidence says scaling has been a powerful driver of progress, even if the economics and limits remain contested.

The business question is where scaling should be purchased.

That question gets easier when you think in terms of representational complexity.

Use larger models when the task contains many overlapping concepts and the cost of misunderstanding them is high. Use smaller models when the task is narrow, structured, repetitive, or easy to validate.

Here is a practical way to think about it:

| Common Belief | Production Reality | Better Question |
|---|---|---|
| Bigger models are better because they are simply smarter. | Bigger models may improve because they reduce interference among overlapping representations. | Does this task need more representational capacity? |
| Scaling means workflow design matters less. | Scaling improves capability, but workflows still need retrieval, validation, permissions, and monitoring. | What must the system guarantee beyond model output? |
| Smaller models are always more efficient. | Smaller models are efficient only when they pass task-specific evaluations and do not create rework. | What is the cost per successful outcome? |

This is where the social media debate usually goes wrong. People argue about model size in isolation, but real organizations do not deploy models in isolation. They use AI systems to complete work.

A model that is cheaper per call may become expensive if it creates retries, escalations, rework, customer friction, or manual cleanup. A model that is more expensive per call may be cheaper overall if it handles hard cases correctly, reduces review burden, and avoids costly errors.

The right metric is not simply price per token.

The better metric is cost per successful outcome.
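Here is a minimal sketch of that metric under one simple cost model:

- Every task gets one model call.
- Accepted outputs cost only the call.
- Rejected outputs are fixed by a person at a fixed rework cost.

The prices, success rates, and rework cost below are hypothetical placeholders, not real vendor pricing. Substitute numbers from your own evaluation set.

```python
from dataclasses import dataclass


@dataclass
class ModelOption:
    name: str
    cost_per_call: float   # hypothetical dollars per task (tokens x price)
    success_rate: float    # share of outputs accepted without rework, from your evals


def cost_per_successful_outcome(option: ModelOption, rework_cost: float) -> float:
    """Expected spend per completed task when failures are fixed by a person.

    Every task ends in a success (the model got it right or a person fixed it),
    so under this cost model the expected cost per task is the cost per success.
    """
    failure_rate = 1.0 - option.success_rate
    return option.cost_per_call + failure_rate * rework_cost


# Hypothetical numbers for illustration only.
small = ModelOption("small", cost_per_call=0.002, success_rate=0.70)
large = ModelOption("large", cost_per_call=0.020, success_rate=0.95)
REWORK_COST = 1.50  # hypothetical cost of a person correcting one bad output

for option in (small, large):
    print(option.name, round(cost_per_successful_outcome(option, REWORK_COST), 3))
```

With these made-up numbers, the model that costs ten times more per call comes out at about a fifth of the cost per successful outcome (about $0.095 versus $0.452), because rework dominates. With a cheap enough rework cost, such as $0.05, the ordering flips and the small model wins.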

Where bigger models are worth considering

Bigger models deserve serious consideration when the work has high conceptual overlap.

Examples include:

- Ambiguous customer communications where emotion, policy, product context, and account history interact.

- Complex document review where obligations, exceptions, definitions, and references must be interpreted together.

- Multi-step analysis where the model must synthesize several inputs into a judgment or recommendation.

- High-value drafting where tone, accuracy, structure, and domain nuance matter.

- Difficult classification where labels are not obvious and categories overlap.

- Internal decision support where the model must compare tradeoffs rather than extract a simple field.

In these cases, a larger model may be worth the additional cost because the task requires capacity for nuance.

But the decision still needs evidence. Do not assume the larger model wins. Test it.

Build an evaluation set with real examples. Include normal cases, messy cases, rare cases, and high-risk cases. Compare small, medium, and large models. Measure not only “quality” in a vague sense, but the specific failure modes that matter:

- Did the model identify the right concept?

- Did it handle ambiguity correctly?

- Did it follow the business rule?

- Did it cite or use the right context?

- Did it produce a valid structured output?

- Did it reduce human correction?

- Did it reduce escalation?

- Did it justify the additional cost?
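A minimal sketch of such a harness follows. It assumes each model is wrapped as a function that takes text and returns a JSON string with a `label` field. The `EvalCase` type, the tier names, and the two stub “models” are hypothetical stand-ins for real API calls. The point is that results are broken out by case tier and by failure mode (here, two of them: invalid output and wrong label) rather than reduced to one quality score.

```python
import json
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    text: str
    expected_label: str
    tier: str  # e.g. "normal", "messy", "rare", "high-risk"


def evaluate(model: Callable[[str], str], cases: list[EvalCase]) -> dict[str, dict[str, float]]:
    """Return per-tier rates for two failure modes: invalid output and wrong label."""
    counts = defaultdict(lambda: {"n": 0, "invalid": 0, "wrong": 0})
    for case in cases:
        stats = counts[case.tier]
        stats["n"] += 1
        try:
            label = json.loads(model(case.text))["label"]
        except (json.JSONDecodeError, KeyError, TypeError):
            stats["invalid"] += 1  # unparseable, not an object, or missing the field
            continue
        if label != case.expected_label:
            stats["wrong"] += 1
    return {
        tier: {"invalid_rate": s["invalid"] / s["n"], "wrong_rate": s["wrong"] / s["n"]}
        for tier, s in counts.items()
    }


# Hypothetical stubs standing in for a small and a large model.
def small_model(text: str) -> str:
    return json.dumps({"label": "damaged_item" if "broken" in text else "other"})


def large_model(text: str) -> str:
    if "delivered" in text and "broken" in text:
        return json.dumps({"label": "damaged_and_tracking_mismatch"})
    return small_model(text)


cases = [
    EvalCase("The lamp arrived broken.", "damaged_item", "normal"),
    EvalCase("One lamp is broken but tracking says both were delivered.",
             "damaged_and_tracking_mismatch", "messy"),
]
for name, model in (("small", small_model), ("large", large_model)):
    print(name, evaluate(model, cases))
```

Extending it to the other questions in the list means adding one counter per check, such as a business-rule validator or a context-citation check, so each failure mode gets its own rate.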

The point of LLM scaling research is not to make evaluation unnecessary. It is to help teams understand why larger models may perform better on certain kinds of tasks.

Where smaller models and workflow controls may win

Smaller models can be the better choice when the problem is constrained.

Examples include:

- Routine classification with a stable taxonomy.

- Simple extraction from predictable documents.

- Format normalization.

- Low-risk summaries of short, structured inputs.

- Routing decisions with clear labels.

- Repetitive internal transformations where validation is easy.

In these workflows, the best answer may not be “use a larger model.” The best answer may be:

- retrieve better context;

- define a clearer schema;

- use structured outputs;

- add validation rules;

- route uncertain cases to a larger model;

- use human review for exceptions;

- cache repeated inputs;

- reduce unnecessary prompt length;

- monitor failure patterns.

That is how mature AI systems should work.

A strong architecture does not use one model for everything. It routes work based on difficulty, risk, cost, and required capability.

The easy cases should not pay the frontier-model tax. The hard cases should not be forced through a model that lacks enough capacity to handle them.
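One way to implement “route uncertain cases to a larger model” is a cascade:

1. Call the cheap model first.
2. Validate its output.
3. Escalate only when validation fails.

The sketch uses hypothetical stub models and a toy label set. The returned route name is there so cost and quality can later be measured by route.

```python
import json
from typing import Callable, Optional

Model = Callable[[str], str]  # takes input text, returns the model's raw text output


def parse_and_validate(raw: str, allowed_labels: set[str]) -> Optional[dict]:
    """Return the parsed output if it is a JSON object with an allowed label, else None."""
    try:
        out = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(out, dict):
        return None
    label = out.get("label")
    if not isinstance(label, str) or label not in allowed_labels:
        return None
    return out


def cascade(text: str, small: Model, large: Model, allowed: set[str]) -> tuple[str, Optional[dict]]:
    """Try the small model, escalate to the large one, and fall back to a person."""
    for route, model in (("small", small), ("large", large)):
        result = parse_and_validate(model(text), allowed)
        if result is not None:
            return route, result
    return "human_review", None  # neither model produced a valid, allowed answer


# Hypothetical stubs standing in for real model calls.
def small_stub(text: str) -> str:
    return json.dumps({"label": "billing" if "invoice" in text else "unknown"})


def large_stub(text: str) -> str:
    return json.dumps({"label": "damaged_item" if "broken" in text else "unsure"})


ALLOWED = {"damaged_item", "late_delivery", "billing"}
for message in ("Question about my invoice", "It arrived broken", "Something odd happened"):
    print(message, "->", cascade(message, small_stub, large_stub, ALLOWED))
```

Because escalation is triggered by validation rather than by the model’s own judgment, the cheap path is trusted only when its output is checkably well-formed. A stricter version could also escalate on confidence signals, where a provider exposes them.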

What engineers and developers need to build around

For engineering teams, the practical implication of superposition is not “switch everything to bigger models.”

It is “design systems that know when more capacity is needed.”

That usually means model routing.

A production workflow might use a smaller model for routine classification, a medium model for uncertain cases, and a larger model for cases involving ambiguity, long context, multi-document synthesis, or high failure cost.
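Routing can also be decided up front from cheap signals about the input. The sketch below shows one hypothetical scoring scheme. The signal names, weights, thresholds, and tier names are all assumptions to be tuned against your own evaluation set; none of them is a standard.

```python
from dataclasses import dataclass


@dataclass
class TaskSignals:
    n_documents: int          # how many sources must be synthesized
    context_tokens: int       # approximate input length
    ambiguity_flags: int      # e.g. detected intents or unresolved references (hypothetical)
    high_failure_cost: bool   # money, compliance, or customer impact if wrong


def choose_tier(s: TaskSignals) -> str:
    """Map input signals to a model tier. Weights and thresholds are illustrative."""
    if s.high_failure_cost:
        return "large"  # failure cost overrides cost optimization
    score = 0
    score += 2 if s.n_documents > 1 else 0
    score += 1 if s.context_tokens > 8_000 else 0
    score += s.ambiguity_flags
    if score >= 3:
        return "large"
    if score >= 1:
        return "medium"
    return "small"


print(choose_tier(TaskSignals(1, 500, 0, False)))     # routine classification -> small
print(choose_tier(TaskSignals(1, 1_200, 1, False)))   # one ambiguity signal -> medium
print(choose_tier(TaskSignals(4, 20_000, 2, False)))  # multi-document synthesis -> large
```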

But routing only works if the system can tell when a task is easy or hard. That requires instrumentation.

A useful workflow should track:

- input complexity;

- confidence or uncertainty signals;

- validation failures;

- retry frequency;

- human correction rate;

- escalation rate;

- latency;

- cost per workflow;

- cost per successful outcome;

- quality lift from stronger models.
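A minimal sketch of turning per-request logs into several of those metrics, grouped by route. The `RequestLog` fields are hypothetical; in practice they would come from your logging or observability pipeline. Here an outcome counts as successful if it passed validation and needed no human correction. That is one possible definition, not a standard.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class RequestLog:
    route: str               # which model tier handled the request
    cost: float              # model spend for this request, including retries
    latency_ms: float
    retries: int
    passed_validation: bool
    human_corrected: bool
    escalated: bool


def summarize(logs: list[RequestLog]) -> dict[str, dict[str, float]]:
    """Compute per-route rates, latency, and cost metrics from request logs."""
    by_route: dict[str, list[RequestLog]] = defaultdict(list)
    for log in logs:
        by_route[log.route].append(log)
    report = {}
    for route, rows in by_route.items():
        n = len(rows)
        successes = sum(r.passed_validation and not r.human_corrected for r in rows)
        total_cost = sum(r.cost for r in rows)
        report[route] = {
            "validation_failure_rate": sum(not r.passed_validation for r in rows) / n,
            "retry_rate": sum(r.retries > 0 for r in rows) / n,
            "human_correction_rate": sum(r.human_corrected for r in rows) / n,
            "escalation_rate": sum(r.escalated for r in rows) / n,
            "avg_latency_ms": sum(r.latency_ms for r in rows) / n,
            "cost_per_workflow": total_cost / n,
            # inf flags a route that never produced a clean success
            "cost_per_successful_outcome": total_cost / successes if successes else float("inf"),
        }
    return report


logs = [  # hypothetical log records
    RequestLog("small", 0.002, 300, 0, True, False, False),
    RequestLog("small", 0.004, 650, 1, False, False, True),
    RequestLog("large", 0.020, 1800, 0, True, False, False),
]
for route, metrics in summarize(logs).items():
    print(route, metrics)
```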

The team should also separate model capability from workflow guarantees.

Retrieval provides context. Structured outputs provide format. Validation checks schema and business rules. Permissions restrict data access. Human review handles risk. Observability shows what happened. Evaluation determines whether changes improve the workflow.
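Permissions in particular belong outside the model. The sketch below assumes a hypothetical document store where each document carries a set of allowed groups. Documents are filtered by the user’s groups before anything is placed in the prompt, so no prompt wording can expose a document that was never retrieved.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_groups: frozenset[str]


def authorized_context(docs: list[Document], user_groups: set[str], max_docs: int = 5) -> list[Document]:
    """Keep only documents the user may see, before anything is sent to a model.

    Assumes `docs` is already ranked by relevance by some retriever (not shown).
    """
    visible = [d for d in docs if d.allowed_groups & user_groups]
    return visible[:max_docs]


def build_prompt(question: str, context: list[Document]) -> str:
    """Assemble a prompt from already-authorized sources only."""
    sources = "\n".join(f"[{d.doc_id}] {d.text}" for d in context)
    return f"Answer using only these sources:\n{sources}\n\nQuestion: {question}"


# Hypothetical documents and groups.
docs = [
    Document("policy-returns", "Damaged items can be replaced within 30 days.",
             frozenset({"support", "all"})),
    Document("finance-margins", "Lamp margin targets for Q3.", frozenset({"finance"})),
]
context = authorized_context(docs, user_groups={"support"})
print(build_prompt("Can we replace a broken lamp?", context))
```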

The model is one component, not the whole system.

MIT’s paper helps explain why bigger components may be stronger. It does not remove the need for the rest of the machine.

What leaders should take from the MIT paper

The executive takeaway is not technical trivia. It is purchasing discipline.

If bigger models improve because they reduce interference among overlapping representations, then larger models should be reserved for work where overlapping representations are the problem.

That means leaders should stop asking:

“Do we need the best model?”

They should ask:

“Where does our work contain enough ambiguity, context, and concept overlap that more model capacity changes the outcome?”

That question changes the AI roadmap.

It pushes teams away from generic chatbot deployment and toward workflow analysis. It forces the business to identify where errors are expensive, where judgment matters, where smaller models break, and where structure can reduce uncertainty.

| Decision Area | What to Ask | What to Measure |
|---|---|---|
| Conceptual complexity | Does the task involve many overlapping concepts, ambiguous intent, or nuanced judgment? | Accuracy on difficult cases, edge-case failure rate, human correction rate |
| Model-size justification | Does a larger model materially improve the outcome? | Quality lift, cost increase, latency impact, successful task completion |
| Workflow control | Can structure reduce the need for raw model capability? | Validation pass rate, schema compliance, retry rate, escalation rate |
| Risk and review | What happens if the model is wrong? | Error severity, review burden, customer impact, compliance exposure |
| Routing strategy | Can easy work go to smaller models and hard work go to larger models? | Cost per successful outcome, quality by route, latency by route |

This is a better leadership model than “AI-first” or “use the latest model.”

It treats AI capability as capital. Spend it where it compounds. Conserve it where structure is enough.

A practical example: support operations

Consider a customer support workflow.

A customer writes: “I ordered two lamps, one arrived broken, your tracking page says both were delivered, and this is the third issue I’ve had this month. I want this fixed before my dinner party Friday.”

A narrow classifier might identify “damaged item.” That is not wrong, but it is incomplete.

The message also includes urgency, shipment inconsistency, customer frustration, account history, possible retention risk, and a time-sensitive resolution window. Those signals overlap. A larger model may be better at synthesizing them into a useful support recommendation.

But that larger model still needs system support.

The workflow should retrieve order data, shipment status, customer history, policy rules, replacement availability, and escalation thresholds. The model should not invent those facts. It should use retrieved context. The output should be structured. Business rules should validate refund or replacement eligibility. High-risk exceptions should route to a human.
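A minimal sketch of that division of labor follows. The model proposes a structured action, and deterministic code checks it against retrieved order data and business rules before anything is executed. The `SupportDecision` shape, the 30-day window, and the $200 auto-approval limit are illustrative assumptions, not real policy.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class SupportDecision:        # structured output requested from the model
    action: str               # "replace", "refund", or "escalate"
    order_id: str
    item_sku: str
    amount: float


@dataclass
class OrderRecord:            # retrieved from the order system, never generated
    order_id: str
    delivered_on: date
    item_prices: dict[str, float]


RETURN_WINDOW_DAYS = 30       # hypothetical policy rule
AUTO_APPROVE_LIMIT = 200.0    # hypothetical: above this amount a person decides


def review(decision: SupportDecision, order: OrderRecord, today: date) -> tuple[str, str]:
    """Return ("approve" | "reject" | "human", reason) for a model-proposed action."""
    if decision.action not in {"replace", "refund", "escalate"}:
        return "reject", "unknown action"
    if decision.action == "escalate":
        return "human", "model requested escalation"
    if decision.order_id != order.order_id or decision.item_sku not in order.item_prices:
        return "reject", "item not on this order"
    if decision.amount > order.item_prices[decision.item_sku]:
        return "reject", "amount exceeds item price"
    if (today - order.delivered_on).days > RETURN_WINDOW_DAYS:
        return "human", "outside return window"
    if decision.amount > AUTO_APPROVE_LIMIT:
        return "human", "above auto-approval limit"
    return "approve", "within policy"


order = OrderRecord("A100", date(2025, 3, 3), {"LAMP-1": 89.0, "LAMP-2": 89.0})
proposal = SupportDecision("replace", "A100", "LAMP-1", 89.0)
print(review(proposal, order, today=date(2025, 3, 5)))  # ('approve', 'within policy')
```

The model never decides eligibility on its own. It can only propose, and the exceptions (an out-of-window request, a large amount) land with a person by construction.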

This is the pattern:

Use model capacity for ambiguity.

Use workflow design for control.

A practical example: product data and merchandising

Now consider product attribute extraction.

If the task is to extract color, material, dimensions, and category from a clean vendor sheet, a smaller model with a schema may be enough. The output can be validated against allowed values. Errors are visible. The workflow is constrained.
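A minimal sketch of that constrained case, assuming a hypothetical attribute schema. The model’s extraction is parsed as JSON, and each field is checked against allowed values or a numeric range, so errors surface as explicit messages instead of silently flowing downstream.

```python
import json

# Hypothetical schema for one product category.
ALLOWED_VALUES = {
    "color": {"black", "white", "brass", "walnut"},
    "material": {"metal", "wood", "glass", "ceramic"},
    "category": {"table_lamp", "floor_lamp", "pendant"},
}
HEIGHT_RANGE_CM = (5, 250)  # hypothetical plausible range


def validate_attributes(raw: str) -> list[str]:
    """Return a list of problems; an empty list means the record can be accepted."""
    try:
        record = json.loads(raw)
    except json.JSONDecodeError:
        return ["output is not valid JSON"]
    if not isinstance(record, dict):
        return ["output is not a JSON object"]
    problems = []
    for field, allowed in ALLOWED_VALUES.items():
        value = record.get(field)
        if not isinstance(value, str) or value not in allowed:
            problems.append(f"{field}: {value!r} is not an allowed value")
    height = record.get("height_cm")
    low, high = HEIGHT_RANGE_CM
    if not isinstance(height, (int, float)) or not low <= height <= high:
        problems.append(f"height_cm: {height!r} is missing or out of range")
    return problems


good = '{"color": "brass", "material": "metal", "category": "table_lamp", "height_cm": 48}'
bad = '{"color": "gold-ish", "material": "metal", "category": "lamp", "height_cm": 4800}'
print(validate_attributes(good))  # []
print(validate_attributes(bad))   # three problems: color, category, height_cm
```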

But suppose the task is to interpret messy vendor communication across multiple emails, spec sheets, seasonal notes, and pricing exceptions. Now the model must connect product attributes, supplier context, merchandising intent, shipping constraints, customer-facing language, and category rules.

That is a different task.

The first task is structured extraction.

The second task is concept-heavy synthesis.

The second task may justify a larger model because the representational complexity is higher. But even then, the system should retrieve source documents, cite evidence, validate structured fields, and route uncertain cases for review.
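For the synthesis case, one cheap grounding check is to have the model quote its evidence for each field. The code then verifies that each quote actually appears in the cited source. The sketch assumes a hypothetical output shape in which every field carries a `source_id` and a verbatim `quote`. Exact substring matching after whitespace normalization is a deliberately strict simplification.

```python
import re


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so line wrapping does not break matches."""
    return re.sub(r"\s+", " ", text).strip().lower()


def unsupported_fields(fields: dict[str, dict[str, str]], sources: dict[str, str]) -> list[str]:
    """Return names of fields whose quoted evidence is not found in the cited source."""
    flagged = []
    for name, claim in fields.items():
        source_text = sources.get(claim.get("source_id", ""), "")
        quote = normalize(claim.get("quote", ""))
        if not quote or quote not in normalize(source_text):
            flagged.append(name)
    return flagged


# Hypothetical retrieved sources and model output.
sources = {
    "email-2": "Vendor update: the Aria lamp ships\nin brushed brass starting in May.",
    "spec-1": "Aria table lamp. Height: 48 cm. Shade: linen.",
}
extracted = {
    "finish": {"value": "brushed brass", "source_id": "email-2", "quote": "ships in brushed brass"},
    "height_cm": {"value": "48", "source_id": "spec-1", "quote": "Height: 48 cm"},
    "ship_date": {"value": "April", "source_id": "email-2", "quote": "starting in April"},
}
print(unsupported_fields(extracted, sources))  # ['ship_date']
```

Flagged fields are candidates for human review. A passing check shows only that the quote exists in the source, not that the model interpreted it correctly.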

Again: scaling helps with capacity. It does not replace the workflow.

The better operating model

The best operating model is straightforward:

Spend model capacity where interference is expensive.

Use workflow control where structure is available.

That means:

- Use larger models for ambiguity, synthesis, reasoning, and high-value judgment.

- Use smaller models for narrow, repetitive, structured work that passes evaluation.

- Use retrieval when the model needs facts outside its training.

- Use structured outputs when downstream systems need reliable fields.

- Use validation when outputs must meet business rules.

- Use human review when accountability, risk, or uncertainty is high.

- Use monitoring when the system is expected to improve over time.

This avoids both extremes.

It avoids the expensive mistake of using frontier models for every task. It also avoids the cheap mistake of forcing small models into work they cannot reliably handle.

Scaling is a mechanism, not a strategy

The MIT paper makes LLM scaling more understandable. That is its business value.

It helps explain why bigger models keep improving: they can represent more overlapping features with less damaging interference. That is not a small insight. It gives leaders, builders, and AI enthusiasts a better way to think about model capability.

But the conclusion should be disciplined, not breathless.

Bigger models are not magic. Smaller models are not automatically efficient. Scaling laws are not a deployment plan. Superposition is not a business case by itself.

The companies that benefit most from AI will not simply buy the biggest model available. They will understand why bigger models work, where that extra capacity matters, and how to surround the model with retrieval, validation, routing, human review, and measurement.

LLM scaling explains why capability improves.

Workflow design determines whether that capability becomes business value.

Key Takeaways

- LLM scaling is not just brute force; MIT’s superposition research suggests larger models may improve because they reduce interference between overlapping internal representations.

- Superposition means a model represents more features than it has clean independent dimensions by allowing representations to overlap.

- Bigger models may help most when tasks involve ambiguity, nuance, synthesis, multi-step reasoning, or many overlapping concepts.

- Scaling improves capability, but it does not automatically provide truthfulness, safety, permissions, validation, or workflow reliability.

- Businesses should evaluate model size by cost per successful outcome, not just price per token or benchmark rank.

- Smaller models can be the better choice for narrow, structured, repetitive, and easily validated tasks.

- The strongest production systems route work by task complexity, risk, latency, cost, and quality requirements.

- Scaling is a mechanism. Business value comes from matching model capacity to workflow design.

Practical Decision Framework

Use this framework when deciding whether to pay for a larger model.

- Identify the conceptual complexity. Ask whether the task involves overlapping concepts, ambiguous intent, messy context, or nuanced judgment. If the task is simple extraction or stable classification, a larger model may not be necessary.

- Define the failure cost. A larger model is easier to justify when mistakes affect customers, money, compliance, trust, or high-value decisions. If errors are low-risk and easy to catch, workflow controls may be enough.

- Compare models on real examples. Do not rely only on public benchmarks. Test small, medium, and large models on actual or representative business cases.

- Measure quality lift. Track whether the larger model reduces real failure modes: wrong classifications, poor synthesis, invalid outputs, human corrections, escalations, or rework.

- Measure total cost. Calculate cost per successful outcome, including model calls, retries, latency, human review, rework, and downstream errors.

- Add workflow controls. Use retrieval, structured outputs, validation, permissions, logging, and escalation paths. These controls remain necessary even with larger models.

- Route by difficulty. Send narrow, low-risk, validated work to smaller models. Send ambiguous, high-value, high-risk, or synthesis-heavy work to stronger models.

- Revisit the decision regularly. Model capabilities, pricing, workflow requirements, and failure patterns change. Model selection should be monitored, not frozen.

FAQ

What is LLM scaling?

LLM scaling is the observed pattern that language model performance often improves predictably as model size, training data, and compute increase. Scaling laws describe these relationships, often using power-law patterns between loss and scale.

What is superposition in AI?

Superposition is when a neural network represents more features than it has clean independent dimensions by allowing internal representations to overlap. This can let models store more information, but overlap can also create interference.

Why do bigger AI models improve?

Bigger AI models may improve partly because larger internal representations give overlapping concepts more room. MIT’s superposition research suggests that in strong superposition, interference between representations can shrink with model width, creating more reliable scaling behavior.

Does this mean businesses should always use bigger models?

No. Bigger models can be useful for ambiguous, complex, high-value tasks, but they are often unnecessary for narrow, structured, repetitive, or easily validated work. Businesses should evaluate model size based on task complexity, failure cost, latency, and cost per successful outcome.

Does scaling make AI systems reliable?

No. Scaling can improve model capability, but production reliability still requires retrieval, validation, structured outputs, permissions, monitoring, human review, and clear workflow design.

How should businesses apply MIT’s superposition research?

Businesses should use the research as a mental model for capacity. Larger models are more likely to help when a task involves many overlapping concepts or subtle distinctions. For simpler tasks, workflow structure and smaller models may be more efficient.

Sources

- Superposition Yields Robust Neural Scaling: https://arxiv.org/abs/2505.10465

- Superposition Yields Robust Neural Scaling HTML Version: https://arxiv.org/html/2505.10465v4

- Scaling Laws for Neural Language Models: https://arxiv.org/abs/2001.08361

- Training Compute-Optimal Large Language Models: https://arxiv.org/abs/2203.15556

- Toy Models of Superposition: https://transformer-circuits.pub/2022/toy_model/index.html

- Toy Models of Superposition on arXiv: https://arxiv.org/abs/2209.10652

Related articles from Kyle Beyke

- LLM Scaling: 7 Hard Lessons for Business: https://kylebeyke.com/llm-scaling-lessons-business-ai/

- AI Model Selection: Powerful Guide for Smart Business AI: https://kylebeyke.com/ai-model-selection-business-ai-guide/

- How LLMs Work: Essential Guide for Builders: https://kylebeyke.com/how-llms-work-builders-guide/

- AI Token Costs: The Hidden Incentive Problem: https://kylebeyke.com/ai-token-costs-hidden-incentive-problem/

- AI Workflow Anatomy: Essential Guide for Business: https://kylebeyke.com/ai-workflow-anatomy-business-guide/

- Structured Outputs for AI Workflows: Reliable Guide: https://kylebeyke.com/structured-outputs-for-ai-workflows-guide/