Self-hosting AI is not a maturity badge. It is an operational commitment that only makes sense when control, cost, privacy, latency, customization, or compliance requirements justify the infrastructure and responsibility.

Should Your Business Self-Host AI? A Practical Framework

A lot of businesses are asking whether they should self-host AI.

That is the wrong first question.

The better question is: what are you trying to control?

Privacy? Cost? Latency? Compliance? Customization? Vendor dependence? Reliability? Data residency? Quality? Each answer points to a different deployment strategy. A small consulting firm testing AI-assisted document review does not need the same setup as a hospital, bank, manufacturer, software company, or government contractor. A customer support classifier does not need the same model architecture as a legal review workflow. A local prototype running on a workstation is not the same thing as a secure, monitored, multi-user production system.

Self-hosting AI sounds responsible. It sounds safer, cheaper, and more independent. Sometimes it is. Often, it is none of those things at first.

The honest answer is that most businesses should not begin with self-hosting. They should begin with a governed workflow, clear data rules, measurable use cases, and a deployment model that matches the business risk. For many organizations, that means a managed AI platform. For some, it means private cloud or a dedicated endpoint. For a smaller number, it means cloud-hosted open-weight models, on-prem GPU servers, edge models, or a hybrid routing architecture.

The goal is not to be pro-cloud or pro-self-hosting. The goal is to place each AI workload where it belongs.

Why the self-host AI question matters now

The question matters because AI adoption has moved past curiosity. Businesses are testing AI in support, sales, operations, document processing, coding, analytics, search, training, compliance review, and internal knowledge work. At the same time, many teams are still struggling to turn pilots into reliable business systems.

That creates pressure. Leaders hear that AI is becoming unavoidable. Technical teams hear that sensitive data should never leave company-controlled environments. Finance teams see token costs and wonder whether local models would be cheaper. Security teams worry about data exposure. Product teams want speed. Executives want control.

Those concerns are valid. But they do not all produce the same answer.

A business does not need to self-host AI just because it wants privacy. It may need stronger vendor terms, data classification, retention controls, private networking, human review, access governance, or a different workflow design. A company does not need to self-host AI just because cloud APIs cost money. It may need better prompts, smaller outputs, caching, model routing, batching, or lower-volume use cases. A team does not need to self-host AI just because open-weight models are improving. It may still lack the engineering capacity to operate them securely.

The economics are also changing quickly. Stanford’s 2025 AI Index reported that the inference cost for a system performing at roughly GPT-3.5 level fell more than 280-fold between November 2022 and October 2024. That does not mean every cloud model is cheap or every workload should use an API. It does mean the old assumption that self-hosting is automatically cheaper is weak. You have to compare total cost, not just the absence of a per-token bill.

Total cost includes hardware, cloud GPU rental, engineering time, monitoring, security, updates, backup plans, evaluation, incident response, model serving, latency tuning, and the cost of mistakes.
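To make the comparison concrete, here is a minimal sketch of a break-even calculation. Every number, field name, and function name is a hypothetical placeholder rather than a benchmark, and the model assumes self-hosting cost stays flat as volume grows, which real deployments rarely do.

```python
from dataclasses import dataclass


@dataclass
class SelfHostCosts:
    """Monthly self-hosting costs in USD. All values are illustrative."""
    gpu_or_hardware: float       # GPU rental or amortized hardware
    engineering_hours: float     # hours spent operating the stack
    hourly_rate: float           # loaded cost of one engineering hour
    tooling_and_security: float  # monitoring, patching, backups, audits

    def monthly_total(self) -> float:
        return (self.gpu_or_hardware
                + self.engineering_hours * self.hourly_rate
                + self.tooling_and_security)


def api_monthly_cost(tokens_per_month: float, usd_per_million_tokens: float) -> float:
    """Managed API bill for a given monthly token volume."""
    return tokens_per_month * usd_per_million_tokens / 1_000_000


def break_even_tokens(costs: SelfHostCosts, usd_per_million_tokens: float) -> float:
    """Monthly token volume at which the API bill equals the self-hosting total."""
    return costs.monthly_total() / usd_per_million_tokens * 1_000_000


costs = SelfHostCosts(gpu_or_hardware=2000.0, engineering_hours=40.0,
                      hourly_rate=100.0, tooling_and_security=500.0)
print(f"Self-hosting total: ${costs.monthly_total():,.0f} per month")
print(f"Break-even volume at $2 per million tokens: {break_even_tokens(costs, 2.0):,.0f} tokens")
```

With these placeholder inputs, the team would need billions of tokens per month before the self-hosted stack pays for itself. The point is the shape of the calculation, not the specific figures.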

The common mistake: confusing ownership with control

The most common belief is simple: “If we self-host the model, our data is safe.”

That belief is incomplete.

Self-hosting gives you more direct control over where the model runs. It does not automatically create good security, compliance, reliability, or governance. You still have to manage access, logging, encryption, retention, patching, dependency vulnerabilities, backups, data leakage, prompt handling, output review, model updates, uptime, disaster recovery, and misuse prevention.

In some organizations, self-hosting can be safer because the team has mature infrastructure, clear data classification, strong identity controls, internal security operations, and a real reason to keep workloads inside a controlled environment.

In other organizations, self-hosting can be less safe because the model ends up running on an undersecured server, a developer workstation, an exposed endpoint, or a cloud GPU instance with weak access controls. A managed enterprise AI platform may provide stronger default controls than a hastily self-hosted stack.

That is the uncomfortable truth: privacy is not the same as architecture ownership.

Some major AI platforms now make business data privacy commitments for enterprise or API services, including statements that customer prompts and outputs are not used to train foundation models by default or without permission. That does not make every provider safe for every use case. It does mean businesses should compare actual data flows, terms, retention settings, security controls, and regulatory needs instead of relying on slogans.

The right question is not “cloud or self-hosted?” The right question is “which environment gives this workflow the right balance of control, risk, quality, cost, and operational burden?”

The deployment options are broader than “API or self-hosted”

Many teams say “self-hosting” when they really mean “not using a consumer chatbot.” Those are not the same thing.

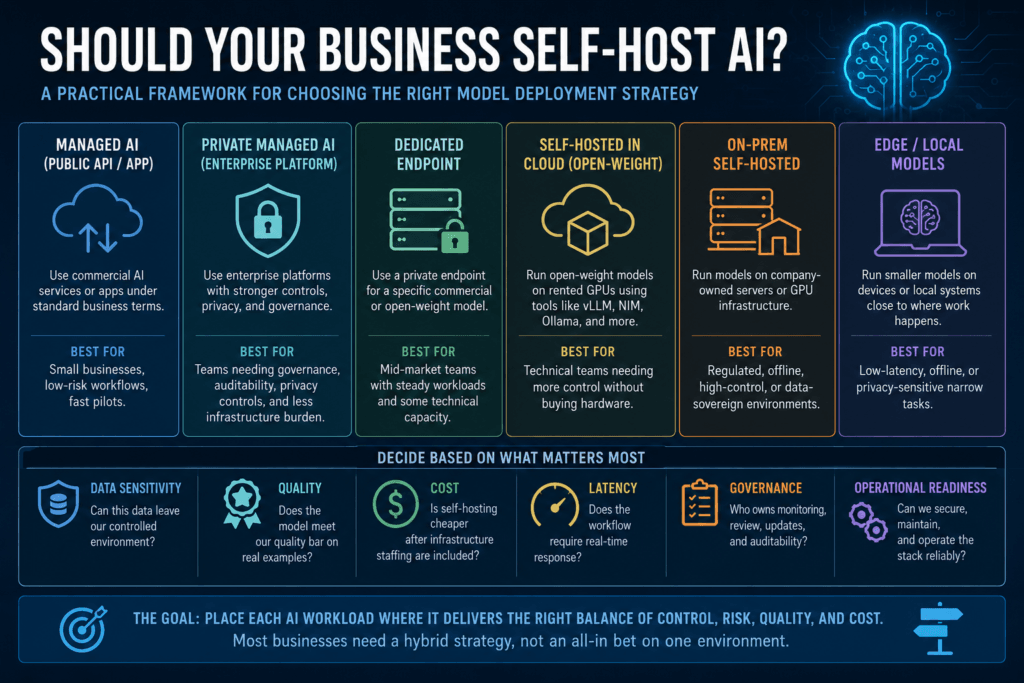

Here is a clearer ladder of options:

| Option | What it means | Best fit |
|---|---|---|
| Public AI app or API | Use a commercial AI tool or API under standard business terms | Small businesses, low-risk workflows, fast pilots |
| Enterprise or private managed AI | Use platforms such as Azure AI, Amazon Bedrock, Google Vertex AI, or enterprise AI services with stronger controls | Teams needing governance, auditability, privacy controls, and less infrastructure burden |
| Dedicated hosted endpoint | Use a private endpoint for a specific commercial or open-weight model | Mid-market teams with steady workloads and some technical capacity |
| Cloud-hosted open-weight model | Run models on rented GPUs using tools such as vLLM, NVIDIA NIM, Ollama, or similar infrastructure | Technical teams needing more control without buying hardware |
| Local workstation or small server | Run smaller models locally for experimentation or limited internal tasks | Prototyping, developer workflows, offline drafting, sensitive local testing |
| On-prem self-hosted model | Run models on company-owned servers or GPU infrastructure | Regulated, offline, high-control, or data-sovereign environments |
| Edge/local model | Run smaller models on devices, laptops, or local systems close to where work happens | Low-latency, offline, or privacy-sensitive narrow tasks |
| Hybrid model routing | Route different tasks to different models and environments based on risk, cost, and quality | Mature teams with multiple AI workloads |

For many businesses, the best long-term strategy is not one environment. It is a routing strategy.

Use managed frontier models where quality matters most. Use private managed environments where governance matters. Use smaller open-weight models where the task is narrow and repeatable. Use local models where data sensitivity, offline access, or latency justifies it. Keep high-risk decisions human-reviewed.

That is more realistic than declaring one model or one hosting method as the universal answer.
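The routing idea above can be sketched as a small placement function. This is an illustration only: the `Workload` fields, the `Placement` names, and the order of the checks are assumptions, and a real policy would come from your own data classification and risk rules.

```python
from dataclasses import dataclass
from enum import Enum


class Placement(Enum):
    MANAGED_FRONTIER = "managed frontier model"
    PRIVATE_MANAGED = "private managed environment"
    SMALL_OPEN_WEIGHT = "smaller open-weight model"
    LOCAL = "local or edge model"


@dataclass
class Workload:
    name: str
    sensitive_data: bool = False         # governance-heavy data
    must_run_offline: bool = False       # offline access or strict latency
    narrow_and_repeatable: bool = False  # easy to evaluate, stable inputs
    high_risk: bool = False              # consequential output


def place(workload: Workload) -> tuple[Placement, bool]:
    """Return (placement, needs_human_review) for one workload."""
    if workload.must_run_offline:
        placement = Placement.LOCAL
    elif workload.sensitive_data:
        placement = Placement.PRIVATE_MANAGED
    elif workload.narrow_and_repeatable:
        placement = Placement.SMALL_OPEN_WEIGHT
    else:
        placement = Placement.MANAGED_FRONTIER
    # High-risk decisions stay human-reviewed wherever the model runs.
    return placement, workload.high_risk


for w in [
    Workload("ticket tagging", narrow_and_repeatable=True),
    Workload("contract review", sensitive_data=True, high_risk=True),
    Workload("field technician assistant", must_run_offline=True),
    Workload("strategy memo"),
]:
    placement, review = place(w)
    print(f"{w.name}: {placement.value}, human review: {review}")
```

Note that human review is tracked separately from placement. A workload can land in any environment and still require a person to approve the output.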

Common belief vs. production reality

| Common Belief | Production Reality | Better Question |
|---|---|---|
| Self-hosting means our data is safe. | Self-hosting shifts security responsibility to your team. | Do we have the controls to operate this safely? |
| Local models are free. | The per-token bill may disappear, but hardware, staff, monitoring, and maintenance do not. | What is the total cost per useful outcome? |
| Open-weight means open-source. | Many downloadable models are open-weight, but licensing and training transparency vary. | What does the license actually allow? |
| A smaller model is good enough if it runs locally. | It is good enough only if it meets the quality bar on real business examples. | Has it been evaluated on our workflow? |
| Self-hosting prevents vendor lock-in. | It may reduce one dependency while adding infrastructure, staffing, and model-maintenance dependency. | Which dependency are we actually reducing? |

What small businesses should usually do

Small businesses should usually start with managed AI tools, clear usage rules, and practical workflow controls.

That does not mean pasting confidential client data into consumer tools. It means using appropriate business or API plans, reading data terms, limiting what data is entered, defining what AI can and cannot be used for, and keeping humans in control of consequential outputs.

At a small scale, self-hosting often creates more burden than value. The business may not have enough volume to justify infrastructure. It may not have staff to monitor model quality or secure the stack. It may not have enough use cases to justify maintaining a custom deployment. It may not even know which workflows are worth automating yet.

A better first step is to identify a narrow, valuable, low-risk workflow: summarizing internal notes, drafting first-pass content, classifying support requests, extracting fields from standard documents, creating internal search helpers, or preparing structured handoffs for human review.

Then measure quality, time saved, error rate, cost, latency, and human correction effort.

If the workflow creates value and begins to scale, then revisit hosting.

What mid-market teams should consider

Mid-market companies often need a hybrid approach.

They may have enough volume for costs to matter, enough sensitive data for privacy to matter, and enough operational complexity for governance to matter. But they still may not need full on-prem self-hosting.

This is where private managed AI and dedicated endpoints can make sense. A team might use an enterprise AI platform for sensitive workflows, a public API for low-risk drafting, a cloud-hosted open-weight model for high-volume classification, and human review for regulated or customer-impacting decisions.

The strongest mid-market self-hosting candidates are usually narrow, repetitive tasks with measurable outputs:

- document classification;

- field extraction;

- tagging and routing;

- internal summarization;

- knowledge-base search support;

- normalization of messy text;

- draft generation with human approval;

- bulk enrichment where latency is less important than throughput.

These tasks are easier to evaluate than broad autonomous reasoning. They also benefit from model routing. A smaller self-hosted or dedicated model can handle routine cases while harder, ambiguous, or higher-risk cases escalate to a stronger model or a person.
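One way to picture that escalation path is a confidence-gated cascade. The sketch below is hypothetical: the threshold values, the `Prediction` type, and the stub models are invented for illustration, and it assumes each model can report a usable confidence score, which in practice often needs calibration.

```python
from typing import Callable, NamedTuple


class Prediction(NamedTuple):
    label: str
    confidence: float  # 0.0 to 1.0, as reported by the model or a calibrator


def classify_with_escalation(
    text: str,
    small_model: Callable[[str], Prediction],
    strong_model: Callable[[str], Prediction],
    small_threshold: float = 0.85,
    strong_threshold: float = 0.70,
) -> tuple[str, str]:
    """Return (label, handled_by), escalating when confidence is too low."""
    first = small_model(text)
    if first.confidence >= small_threshold:
        return first.label, "small_model"
    second = strong_model(text)
    if second.confidence >= strong_threshold:
        return second.label, "strong_model"
    return "needs_review", "human"


# Stub models standing in for real inference calls.
def demo_small(text: str) -> Prediction:
    return Prediction("billing", 0.95 if "invoice" in text.lower() else 0.40)


def demo_strong(text: str) -> Prediction:
    return Prediction("technical", 0.80 if "error" in text.lower() else 0.30)


for ticket in ["Invoice total is wrong", "Error 500 on login", "Hello?"]:
    print(ticket, "->", classify_with_escalation(ticket, demo_small, demo_strong))
```

Tracking how often each tier handles a request gives you the escalation rate, which is one of the clearest signals of whether the smaller model is actually carrying the routine load.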

The mistake is using self-hosting as a blanket strategy before the organization understands which tasks are actually stable enough to own.

What large enterprises and regulated organizations should do

Large enterprises have a different problem. They may have data residency requirements, security teams, procurement controls, vendor review processes, legal obligations, internal platforms, and enough volume to make infrastructure economics meaningful.

For them, self-hosting may be justified. But even then, it should not be treated as the only serious option.

A mature enterprise AI strategy often looks like governed model placement. Some workloads use frontier managed models. Some use private cloud. Some use dedicated endpoints. Some use open-weight models in controlled infrastructure. Some remain on-prem. Some are rejected because the risk, quality, or integration burden is not worth it.

The decision should be made at the workflow level.

A low-risk internal brainstorming assistant does not need the same controls as an AI system that summarizes medical records, reviews contracts, updates customer accounts, suggests financial decisions, or triggers operational actions. A knowledge search assistant with human review does not need the same architecture as an automated claims-processing workflow.

The more consequential the output, the more the architecture needs validation, logging, escalation, monitoring, access controls, and human accountability.

The self-hosting stack is not just the model

Technical teams know this, but business conversations often skip it: a model file is not a production system.

If you self-host AI, you need a serving layer, hardware or GPU capacity, storage, networking, authentication, authorization, logging, monitoring, rate limiting, evaluation, alerting, security patching, secrets management, backup procedures, deployment workflows, and an incident plan.

Tools such as Ollama can make local experimentation easier. vLLM can serve models through an OpenAI-compatible HTTP server. Hugging Face Text Generation Inference has been used for model serving and includes production-oriented features, though Hugging Face now describes TGI as being in maintenance mode and points toward newer inference engines such as vLLM and SGLang. NVIDIA NIM provides optimized inference microservices for teams working in NVIDIA-centered environments.

Those tools are useful. They do not remove the need for operations.
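As a small illustration of what "OpenAI-compatible" means in practice, the sketch below builds a chat completion request using only the Python standard library. The base URL, the model name `my-served-model`, and the placeholder API key are assumptions; substitute whatever your own server was started with. Nothing here handles authentication policy, retries, rate limits, or logging, which is exactly the operational work the serving tool leaves to you.

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, user_text: str,
                       api_key: str = "placeholder-key") -> urllib.request.Request:
    """Build a POST request for an OpenAI-compatible /v1/chat/completions endpoint."""
    payload = {
        "model": model,  # must match the model name the server is serving
        "messages": [{"role": "user", "content": user_text}],
        "max_tokens": 128,
        "temperature": 0.0,
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json",
                 "Authorization": f"Bearer {api_key}"},
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 30.0) -> str:
    """Send the request and return the first choice's text (needs a running server)."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


# Assumed local address; building the request does not contact the server.
req = build_chat_request("http://localhost:8000", "my-served-model",
                         "Classify this support ticket: printer offline again")
print(req.get_method(), req.full_url)
```

Calling `send(req)` only works once a compatible server is listening at that address. Because the request shape matches the managed API format, the same client code can usually be pointed at either environment, which helps when routing workloads between them.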

This is where many self-hosting plans fail. The team compares model API pricing against GPU rental or hardware pricing and stops there. That is incomplete. The real comparison is managed service cost versus the cost of running a reliable AI system.

For a prototype, that cost may be small. For production, it grows quickly.

Be precise about “open-source” and “open-weight”

Businesses should also be careful with terminology.

Many models are called open-source in casual conversation when they are more accurately described as open-weight. The distinction matters because licenses affect commercial use, redistribution, modification, compliance, and long-term risk.

The Open Source Initiative’s Open Source AI Definition emphasizes freedoms to use, study, modify, and share, along with access to the preferred form for making modifications. Many popular model releases do not meet every expectation a software team may associate with open source.

That does not make those models useless. It means businesses should review the license and documentation before building strategy around them.

Llama models, for example, are widely used and valuable, but they are governed by Meta’s community license rather than a standard permissive open-source license. Mistral and Qwen have released many of their open-weight models under Apache 2.0, though not every model in either family uses that license. DeepSeek and other model families may be useful in some contexts, but organizations should review exact licenses, security posture, geopolitical concerns, vendor policies, and client expectations before adoption.

The practical rule is simple: do not build a commercial AI deployment on a model license you have not read.

The better mental model: model placement by risk and workload

A useful mental model is model placement.

Do not ask, “Are we a self-hosted AI company or a cloud AI company?”

Ask, “Where should this specific workload run?”

A support-ticket classifier may be cheap, repetitive, and low-risk enough for a smaller model. A board-level strategy analysis may need a stronger managed model. A sensitive document workflow may require private managed infrastructure or on-prem deployment. A field technician’s offline assistant may need an edge model. A high-risk compliance workflow may need human approval no matter where the model runs.

The deployment strategy should follow the workload.

A practical decision process looks like this:

| Decision Area | What to Ask | What to Measure |
|---|---|---|
| Data sensitivity | Can this data leave our controlled environment under our policies and contracts? | Data class, retention needs, access scope |
| Quality | Does the model meet the business quality bar on real examples? | Accuracy, accepted-output rate, correction rate |
| Cost | Is self-hosting cheaper after infrastructure and staff are included? | Cost per useful outcome |
| Latency | Does the workflow require real-time response? | p50 and p95 latency |
| Reliability | What happens when the model fails? | Failure rate, retry rate, escalation rate |
| Governance | Who owns monitoring, review, and updates? | Auditability, logs, policy coverage |
| Customization | Do we need fine-tuning, adapters, or domain-specific behavior? | Improvement over prompting and retrieval |
| Operational readiness | Can we secure and maintain the stack? | Staffing, incident response, patch cadence |

The most important metric is not cost per model call. It is cost per useful, accepted, business-safe outcome.
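Two of the measurements in the table, latency percentiles and cost per accepted outcome, are simple to compute once calls are logged. The following is a minimal sketch with made-up sample data; the nearest-rank percentile method is one common convention among several, and the function names are illustrative.

```python
import math


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def cost_per_accepted_outcome(total_cost_usd: float, accepted_outputs: int) -> float:
    """Total spend divided by outputs a reviewer or validator actually accepted."""
    if accepted_outputs == 0:
        return math.inf
    return total_cost_usd / accepted_outputs


# Hypothetical latencies in milliseconds for ten calls.
latencies_ms = [120, 135, 150, 180, 210, 240, 300, 420, 650, 1900]
print("p50:", percentile(latencies_ms, 50), "ms")
print("p95:", percentile(latencies_ms, 95), "ms")

# Hypothetical month: 1,000 calls costing $50 in total, 800 accepted.
print("Cost per call:", 50.0 / 1000)
print("Cost per accepted outcome:", cost_per_accepted_outcome(50.0, 800))
```

In this example the per-call cost understates the real cost by a quarter, because one in five outputs was rejected or needed rework. A cheaper model with a lower acceptance rate can easily end up more expensive per useful result.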

When self-hosting makes sense

Self-hosting becomes more attractive when several conditions are true at the same time.

The workload is high-volume and predictable. The data is sensitive enough that controlled infrastructure matters. The task is narrow enough to evaluate. The business has technical staff who can operate the system. Latency, offline access, or data residency materially affects the outcome. The organization can measure quality and detect failure. The cost of managed services is high enough to justify the operational burden.

That is a real case for self-hosting.

But the bar should be explicit. “We want control” is not enough. Control over what? Data location? Model weights? Cost curve? Uptime? Customization? Vendor contracts? Latency? Each form of control has a different cost.

If the business cannot name the control it needs, it is probably not ready to self-host.
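The conditions above can be written down as an explicit checklist so the bar is visible rather than implied. This is a sketch only: the keys and the all-or-nothing reading are assumptions, and a real review would weigh the conditions rather than simply count them.

```python
# Conditions paraphrased from the paragraph above; the keys are illustrative names.
READINESS_CONDITIONS = {
    "high_volume_predictable": "Workload is high-volume and predictable",
    "sensitive_data": "Data is sensitive enough that controlled infrastructure matters",
    "narrow_task": "Task is narrow enough to evaluate",
    "technical_staff": "Technical staff can operate the system",
    "latency_offline_or_residency": "Latency, offline access, or data residency materially matters",
    "measurable_quality": "Quality can be measured and failure detected",
    "managed_cost_high": "Managed service cost justifies the operational burden",
}


def unmet_conditions(answers: dict[str, bool]) -> list[str]:
    """Return descriptions of every condition not answered True (missing counts as unmet)."""
    return [description
            for key, description in READINESS_CONDITIONS.items()
            if not answers.get(key, False)]


answers = {"sensitive_data": True, "narrow_task": True, "technical_staff": False}
gaps = unmet_conditions(answers)
print(f"{len(gaps)} of {len(READINESS_CONDITIONS)} conditions unmet:")
for gap in gaps:
    print(" -", gap)
```

Treating unanswered conditions as unmet is deliberate. If nobody on the team can answer a question, that gap is itself a readiness signal.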

When self-hosting is premature

Self-hosting is usually premature when the company is still experimenting, does not have a clear use case, cannot evaluate output quality, has no owner for security and maintenance, has low AI usage, or is mainly reacting to a vague sense that cloud AI is unsafe.

It is also premature when the team needs best-in-class reasoning, coding, multimodal support, tool use, or complex agent behavior but lacks the infrastructure to serve and evaluate comparable models. Open-weight models are improving, but model capability still varies by task, size, deployment setup, quantization, context length, and evaluation method.

A local model that gives confident wrong answers is not a privacy win. It is just a private failure.

Practical guidance for leaders and builders

Leaders should fund workflow discovery before infrastructure. Start with the business process, not the hosting method. Identify which workflows are frequent, language-heavy, measurable, and safe enough to pilot. Define the quality bar before comparing models. Require teams to measure latency, cost, correction rate, and exception handling.

Decision makers should ask vendors and internal teams practical questions: What data is sent? Where is it stored? Is it used for training? How is access controlled? What happens on failure? Who reviews outputs? What is logged? How are models evaluated? What is the rollback plan?

Technical teams should test candidate models on real examples, not generic demos. They should separate retrieval quality from generation quality, use structured outputs where appropriate, define human checkpoints, monitor drift, and avoid turning every task into an open-ended agent loop.

Product teams should pilot workflows where AI assists a clear decision or reduces repetitive work. Avoid starting with high-risk autonomy. The first production win should be boring enough to operate and valuable enough to measure.

Self-hosting is a placement decision

Self-hosting AI is not a sign that a business is advanced. It is a sign that the business has chosen to own more of the AI operating burden.

Sometimes that is exactly the right move. A company with sensitive data, predictable volume, technical staff, clear evaluation, and real infrastructure needs may benefit from self-hosted or privately hosted models. But for many businesses, the better first move is a managed AI platform with strong data rules, human review, and workflow measurement.

The future is not simply cloud versus self-hosted. The future is model placement by risk, cost, capability, and control.

The business that wins will not be the one that declares one hosting strategy superior in every case. It will be the one that knows which work belongs where.

Key Takeaways

- The right question is not “Should we self-host AI?” but “What are we trying to control?”

- Self-hosting can improve control, but it also shifts security, reliability, monitoring, and maintenance responsibility to your team.

- Managed enterprise AI platforms may be safer and more practical than poorly operated self-hosted systems.

- Open-weight models are useful, but not every downloadable model should be called open-source.

- Small businesses should usually start with governed managed AI tools and clear workflow rules.

- Mid-market teams should consider hybrid approaches, private endpoints, and selective self-hosting for narrow, measurable workloads.

- Enterprises should make model placement decisions by data sensitivity, risk, latency, compliance, and operational readiness.

- The best metric is cost per useful, accepted business outcome, not cost per model call.

Practical Decision Framework

Use this framework before deciding to self-host AI:

- Define the workload. Do not evaluate hosting in the abstract. Name the workflow: support triage, document extraction, internal search, code review, contract analysis, sales summarization, or something else.

- Classify the data. Decide whether the workflow includes public, internal, confidential, regulated, client-sensitive, or legally restricted data.

- Set the quality bar. Test candidate models on real examples. Measure accepted outputs, correction rate, hallucination risk, and reviewer effort.

- Calculate total cost. Compare managed AI cost against hardware, GPU rental, engineering time, monitoring, security, support, downtime, and incident response.

- Choose the least burdensome safe architecture. Use managed AI when it satisfies the risk profile. Use private managed AI when governance matters. Use self-hosting only when the control gained is worth the burden.

- Route by risk. Not every request should go to the same model. Use smaller models for narrow routine work, stronger models for difficult cases, and humans for high-risk decisions.

- Instrument before scaling. Log latency, cost, model version, prompt version, validation failures, human corrections, and final business outcomes.
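For the instrumentation step, one structured record per model call is usually enough to start. The sketch below shows one possible shape; the field names and example values are assumptions, and a real deployment would ship these lines to whatever logging system the team already uses.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class CallRecord:
    """One log entry per model call. Field names are illustrative."""
    workflow: str
    model_version: str
    prompt_version: str
    latency_ms: float
    cost_usd: float
    validation_passed: bool
    human_corrected: bool
    business_outcome: Optional[str] = None  # filled in once the result is known


def to_log_line(record: CallRecord) -> str:
    """Serialize a record as one JSON line with stable key order."""
    return json.dumps(asdict(record), sort_keys=True)


record = CallRecord(
    workflow="support_triage",
    model_version="example-model-2025-01",
    prompt_version="triage-v3",
    latency_ms=412.0,
    cost_usd=0.0008,
    validation_passed=True,
    human_corrected=False,
    business_outcome="routed_to_billing",
)
print(to_log_line(record))
```

Recording the model and prompt versions on every call is what makes later comparisons possible. Without them, a quality drop after an update is hard to trace to its cause.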

FAQ

Should small businesses self-host AI?

Usually not at first. Most small businesses are better served by managed AI tools, clear data rules, human review, and narrow workflow pilots. Self-hosting becomes more relevant when volume, privacy, latency, or client requirements justify the added responsibility.

Is self-hosting AI cheaper than using an API?

Not automatically. Self-hosting may reduce per-token charges, but it adds infrastructure, engineering, monitoring, security, maintenance, and reliability costs. The better metric is total cost per useful business outcome.

Does self-hosting AI guarantee privacy?

No. Self-hosting gives you more direct control over the environment, but privacy depends on access controls, encryption, logging, retention, security practices, patching, and governance. A poorly secured self-hosted deployment can be riskier than a properly governed managed platform.

What is the difference between open-source and open-weight AI models?

Open-weight models provide access to model weights, but they may not provide the full freedoms or preferred modification materials associated with open-source AI. Businesses should review the license, permitted uses, redistribution terms, and compliance obligations before deploying any model commercially.

When does self-hosting AI make sense?

It makes sense when a business has sensitive data, predictable high-volume workloads, strong technical staff, clear evaluation methods, operational readiness, and a specific need for local control, offline operation, low latency, customization, or data residency.

What is the best alternative to self-hosting AI?

For many businesses, the best alternative is private or enterprise managed AI with clear data controls, auditability, workflow governance, and human review. Hybrid model routing is often the most mature long-term approach.

Sources

- McKinsey & Company: https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai

- Stanford HAI 2025 AI Index Report: https://hai.stanford.edu/ai-index/2025-ai-index-report

- NIST AI Risk Management Framework: Generative Artificial Intelligence Profile: https://www.nist.gov/publications/artificial-intelligence-risk-management-framework-generative-artificial-intelligence

- OpenAI Enterprise Privacy: https://openai.com/enterprise-privacy/

- Amazon Bedrock Security and Privacy: https://aws.amazon.com/bedrock/security-compliance/

- Microsoft Azure AI Data Privacy: https://learn.microsoft.com/en-us/azure/foundry/responsible-ai/openai/data-privacy

- Google Cloud Vertex AI Zero Data Retention and Training Restriction: https://docs.cloud.google.com/vertex-ai/generative-ai/docs/vertex-ai-zero-data-retention

- Ollama Quickstart Documentation: https://docs.ollama.com/quickstart

- vLLM OpenAI-Compatible Server Documentation: https://docs.vllm.ai/en/latest/serving/openai_compatible_server/

- Hugging Face Text Generation Inference Documentation: https://huggingface.co/docs/text-generation-inference/index

- NVIDIA NIM Microservices: https://www.nvidia.com/en-us/ai-data-science/products/nim-microservices/

- Open Source Initiative Open Source AI Definition: https://opensource.org/ai/open-source-ai-definition

- Llama 3.1 Community License Agreement: https://www.llama.com/llama3_1/license/

- Mistral AI Models: https://mistral.ai/models

- Qwen3 GitHub Repository: https://github.com/QwenLM/qwen3

- DeepSeek Model Mechanism and Training Methods: https://cdn.deepseek.com/policies/en-US/model-algorithm-disclosure.html

Related articles from Kyle Beyke

- AI Model Selection: Powerful Guide for Smart Business AI: https://beykeworkflows.com/ai-model-selection-business-ai-guide/

- AI Cost Control: Smart Guide for Efficient Systems: https://beykeworkflows.com/ai-cost-control-systems-tokens-latency-routing/

- AI Workflow Anatomy: Essential Guide for Business: https://beykeworkflows.com/ai-workflow-anatomy-business-guide/

- AI Use Cases: 7 Smart Rules for Business: https://beykeworkflows.com/ai-use-cases-rules-business/